From innovation to production with ComfyUI

May 17, 2026

A cross-platform AI video creation tool for storytelling with persistent characters who can talk.

Role: Design Engineer (originating designer and primary experimental-path operator)

Timeframe: 2023–2026 (3 years)

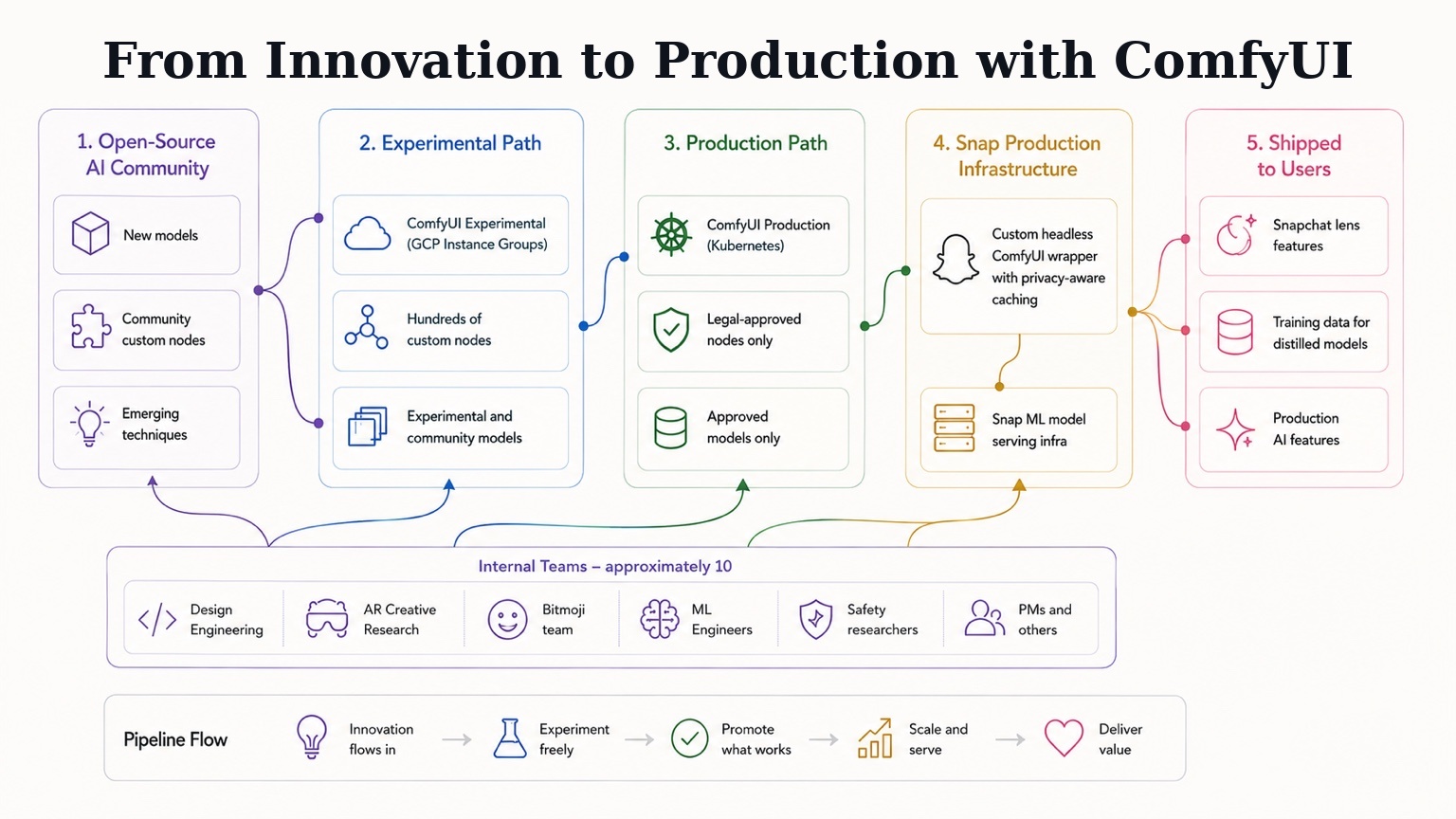

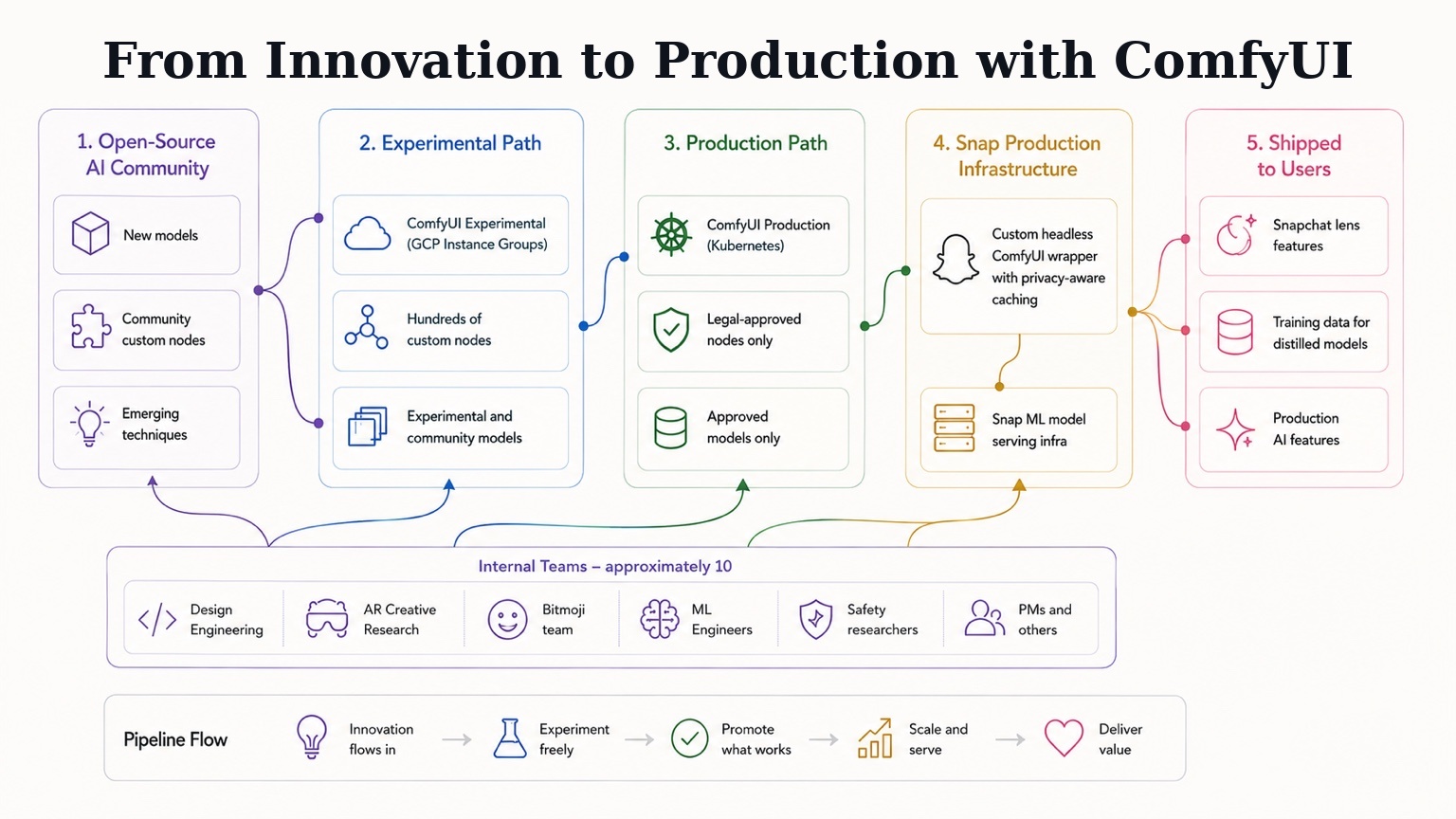

Scale: ~10 teams at peak, hundreds of custom nodes, multiple shipped Snapchat lens features

Tech: ComfyUI, GCP, Kubernetes, Docker, Python, PyTorch, Diffusers, Firebase, custom ComfyUI wrapper for production deployment

TL;DR

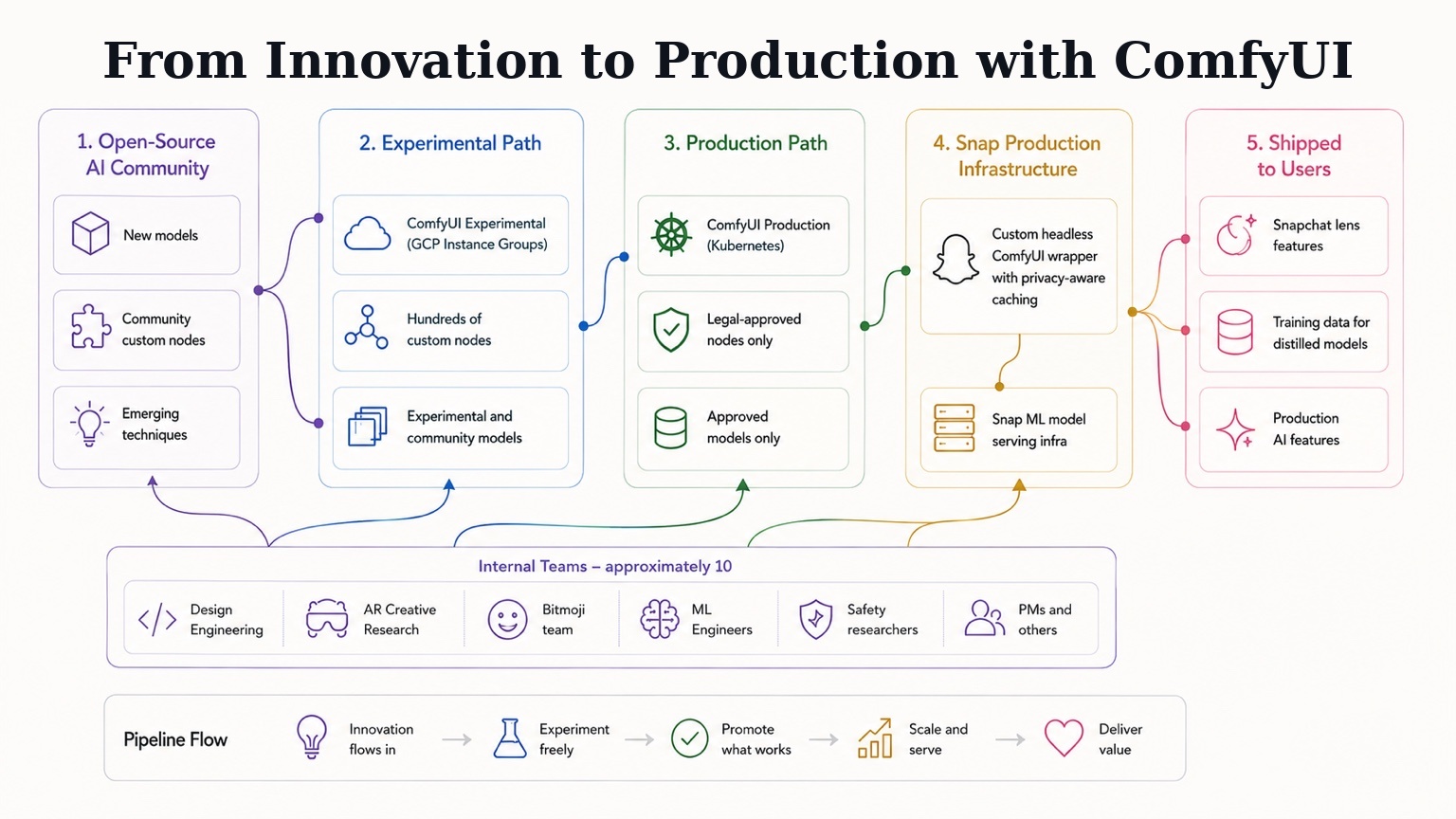

- Proposed and built Snap's internal ComfyUI platform with a dual-path architecture (experimental + production-approved) that bridged the gap between the open-source generative AI community and Snap's ML engineering, enabling the same tool to serve discovery, prototyping, and production deployment.

- Platform served roughly ten teams across three years, including Design Engineering, AR Creative Research, the Bitmoji team, ML researchers, and safety researchers — powering shipped Snapchat lens features, training data generation for distilled real-time models, and the early prototyping phase of products like Easy Lens (now Snap's standalone lens creation app).

- Built and maintained hundreds of custom nodes, custom production infrastructure with privacy-aware caching, team-specific tooling and templates, and the foundation that supported Snap Productions — a multi-clip AI video creation suite whose architecture extended this platform's design.

The disconnect

When generative AI took off with Stable Diffusion and ChatGPT in late 2022, two communities formed around it that barely talked to each other.

On one side were ML researchers and engineers: people who read the papers, understood the underlying architectures, and built the production systems that put models in front of users. On the other side was the open-source creative AI community: artists, designers, technologists, and curious newcomers who'd downloaded Automatic1111 (A1111) or ComfyUI and were stitching together capabilities through extensions, custom nodes, and shared workflows. The OSS community moved fast, sometimes faster than the research-to-product pipelines inside large companies, because it was unconstrained by infrastructure decisions, model licensing concerns, or production engineering timelines.

At Snap, these two worlds existed inside the same building but rarely intersected. Most ML researchers and engineers I worked with had heard little of A1111 or ComfyUI. Meanwhile, a few of us on the design engineering side were using these tools daily — discovering capabilities through community workflows, building prototypes that demonstrated what was possible, and trying to translate those discoveries back to ML teams in a form they could productionize.

The result was three persistent problems:

- ML teams discovered new capabilities slowly. Techniques that were already commonplace in the OSS community took months to surface inside research and production teams.

- Productionizing a feature took a long time. Every workflow had to be reimplemented from scratch in optimized form by ML engineers, even when a working version already existed in ComfyUI.

- What the community could already do, we often couldn't. The OSS world had functional solutions for things like multi-character generation, controllable composition, and identity preservation — but those didn't reach Snap's products until ML teams could build their own versions.

A concrete example: a project we called Dreams.

Dreams was a feature where we'd generate creative images of users in different scenes. The initial implementation fine-tuned a complete Stable Diffusion model per user — expensive and slow. While that approach was the production path, I was experimenting with newer techniques from the OSS community. I trained dozens of smaller LoRA adapters using fewer images, faster and cheaper. For a stretch in 2023, I might have been the person at Snap with the most hands-on LoRA training experience, almost entirely because the OSS community had figured out how to do it and ML teams were focused elsewhere.

Then IP-Adapter emerged from the OSS world. I built workflows and prototypes with it, shared them with ML teams, and the company eventually moved away from expensive fine-tuning toward IP-Adapter for identity preservation.

But Dreams was for Snapchat — a product fundamentally about friends. So the natural next question was: how do you generate images with you and your friends? ML teams didn't have an answer. The OSS community, working through attention masks and segmentation models like Segment Anything (SAM), already did. I built ComfyUI workflows combining IP-Adapter, attention masks, and SAM-based segmentation for multi-character generation, shared them with ML engineers, and they integrated the techniques into their Diffusers pipelines for production.

This pattern — design engineers discovering capabilities in OSS, prototyping in ComfyUI, hand-carrying the insights to ML teams who then reimplemented them in production form — worked, but slowly.

Every cycle took weeks or months. Worse, the prototyping environment and the production environment were entirely different stacks: the OSS world for discovery, hand-coded Diffusers pipelines for production. Nothing flowed cleanly between them.

That's the gap the platform would eventually close.

The proposal

By mid-2023, I had been operating in that pattern (discover OSS models and techniques, prototype in ComfyUI, hand off to ML teams) long enough to see its limits clearly. Every cycle worked, but every cycle was also a lossy translation. Workflows that ran fine in ComfyUI had to be reimplemented in production-grade Python by ML engineers. Discoveries that took an afternoon in the OSS world took months to ship inside the company. And the prototyping environment and the production environment shared almost no DNA.

The proposal I wrote was simple: what if the same tool we used for discovery, prototyping, and design could also be the tool we used for production?

The case for this was harder to make than it sounds. ComfyUI was, to most ML engineers at the time, a hobbyist tool — a fun way to play with diffusion models, not a serious production substrate. The standard production stack was hand-coded Diffusers pipelines, optimized end-to-end by ML engineers who knew the models deeply. Suggesting that ComfyUI could sit alongside that stack — not replacing it, but offering an additional path from inception to production for certain kinds of workflows — required making the case carefully.

The argument came down to four points:

The OSS community had momentum we couldn't match internally. Hundreds of new extensions appeared each month. New model architectures had ComfyUI support within days of release. The community was effectively doing applied AI R&D at a velocity no single ML team could replicate, and ComfyUI was where most of that velocity landed first.

The node-based architecture was a feature, not a workaround. ComfyUI forced users to understand the underlying components of a generative pipeline — encoders, samplers, conditioning, control mechanisms. That made it a genuinely good prototyping environment for cross-functional teams: designers and researchers and engineers could read the same graph and understand what was happening.

Extensibility meant we could build production-grade infrastructure on top. ComfyUI's extension system let us add the things production needed — proper caching, privacy-aware execution, integration with Snap's existing model serving infrastructure — without forking the tool itself.

The performance concerns were real but engineerable. Yes, running ComfyUI as a production substrate had overhead that hand-coded pipelines didn't. But for the right workflows — especially those that benefited from rapid iteration and OSS community velocity — the tradeoff was worth it. And for cases where it wasn't, ML teams could still take a workflow proven on the platform and reimplement it in their optimized pipelines. The platform wouldn't replace existing production paths; it would add a new one optimized for speed from inception to user.

I put this in a slide deck and pitched it. The proposal got buy-in, and the work began. I designed the dual-path architecture and owned the experimental path. A design engineering partner joined to lead the production path, with dedicated ML engineering support for the integration with Snap's production infrastructure. He ran point on the production side — including the meetings I couldn't always attend from Tokyo — which let me focus on what I did best: the experimental environment where discovery happened, and the platform-wide work of keeping both paths in sync.

That partnership turned out to be one of the most valuable parts of the project. The platform succeeded because it had two committed owners — one of us close to the OSS community and the prototyping work, one of us close to production infrastructure — building toward a shared design.

The dual-path architecture

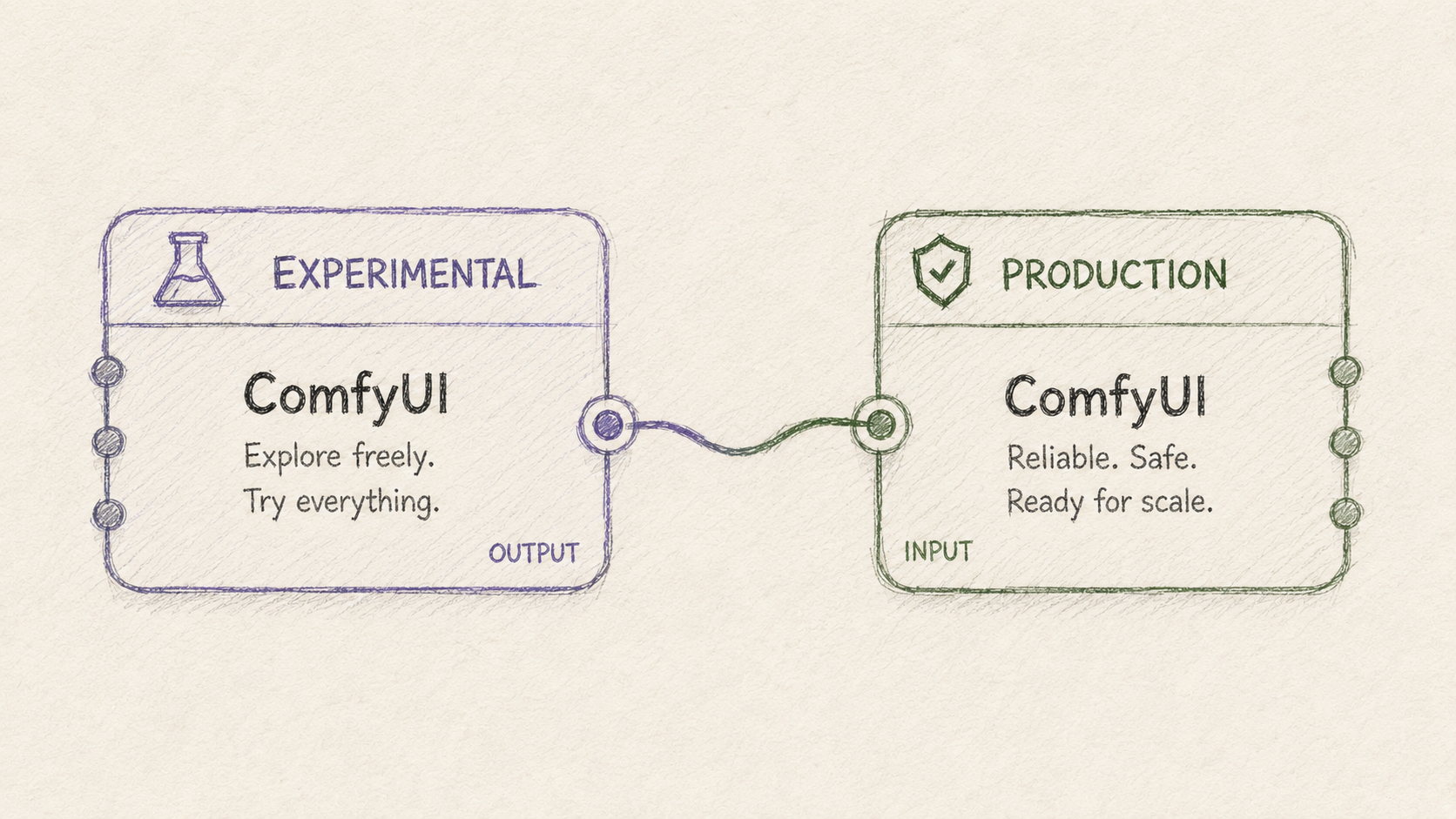

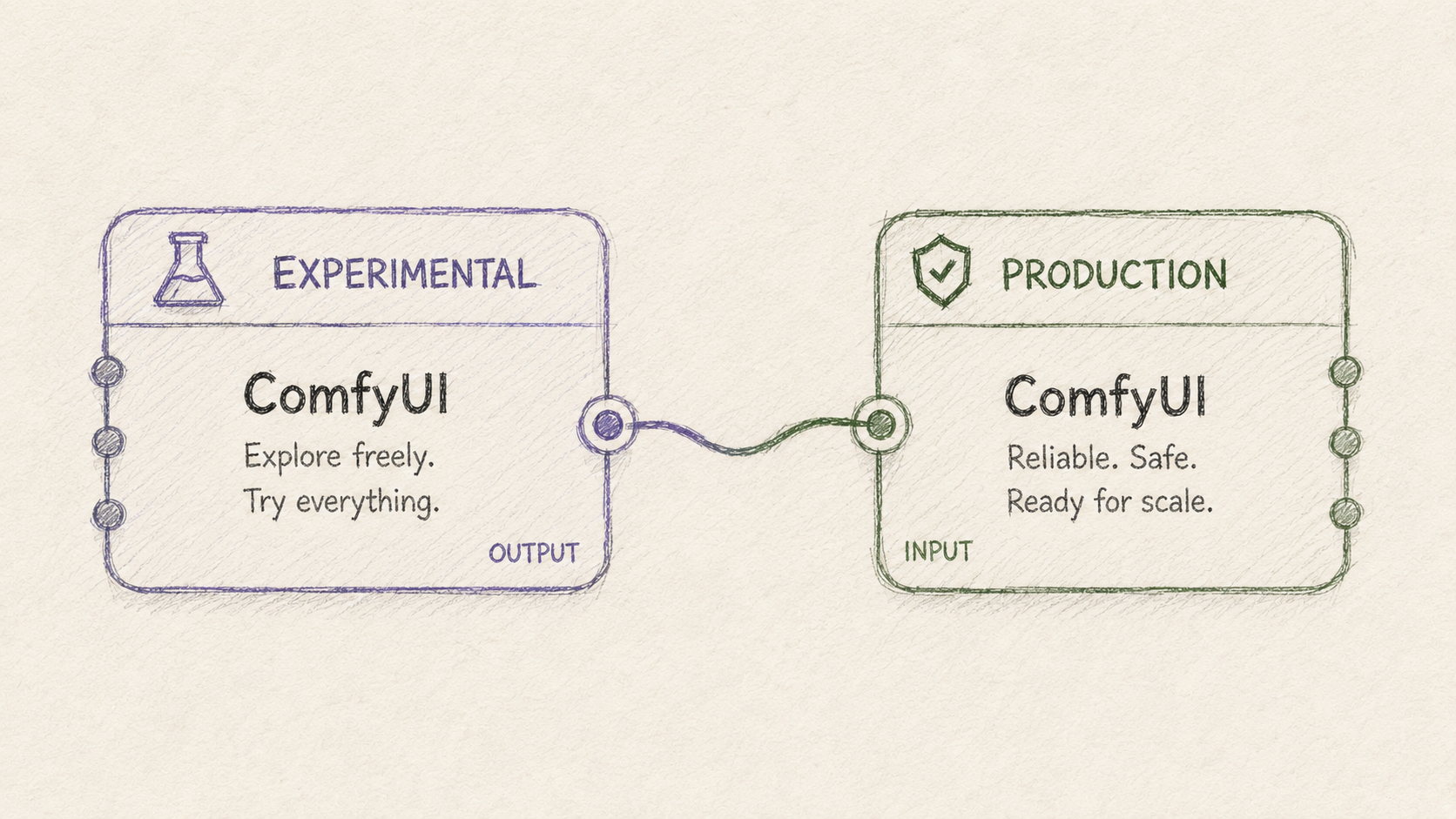

The platform was designed around a single conceptual move: separating exploration from production while keeping them mechanically aligned.

Two ComfyUI deployments ran in parallel, each serving a different purpose, but built from the same underlying image so that workflows could move between them with minimal translation.

The experimental path was open. Anything could be installed: community custom nodes, experimental models, in-development extensions, half-working integrations. I maintained it as the primary user, adding new nodes and capabilities as the OSS community released them and as internal teams requested specific tools. This was where discovery happened — where design engineers, AR creative researchers, the Bitmoji team, ML engineers, safety researchers, and eventually product managers came to try things, prototype features, and learn what was possible. It ran on GCP instance groups, with session caching that mostly kept the same user on the same server through a workflow.
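To illustrate what "keeping the same user on the same server" can look like, here is a minimal sketch using rendezvous hashing. This is an assumption for illustration only: it is not a description of how the platform's session caching was actually implemented, and the function name `pick_server` and the server names are hypothetical.

```python
import hashlib
from typing import List


def pick_server(user_id: str, servers: List[str]) -> str:
    """Pick a server for a user with rendezvous (highest-random-weight) hashing.

    Each (user, server) pair gets a deterministic score; the user is routed
    to the highest-scoring server. A user only moves when their own server
    leaves the pool, so routing is sticky for most users most of the time.
    """
    def score(server: str) -> int:
        digest = hashlib.sha256(f"{user_id}:{server}".encode("utf-8")).hexdigest()
        return int(digest, 16)

    return max(servers, key=score)


# Hypothetical pool of experimental-path instances.
pool = ["comfy-exp-1", "comfy-exp-2", "comfy-exp-3"]
chosen = pick_server("designer-42", pool)
```

The appeal of this kind of scheme is that it needs no shared session table: any router with the same server list computes the same answer.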

The production path was hardened. Only legally approved nodes and models were installed. Everything had been vetted for licensing, safety, and dependency stability. The production path served as a testable endpoint that exactly matched the real production infrastructure — same Docker image, same configuration — so that a workflow validated here could be expected to behave the same way when deployed to actual production traffic.

Behind the production path sat the real production infrastructure: a custom ComfyUI wrapper, built on top of Snap's existing ML model serving infrastructure, that ran workflows headless without the UI. This wrapper had its own caching implementation, which was critical for keeping models in memory across requests while evicting user-generated content after execution, a requirement for privacy compliance. The production path eventually moved from GCP instance groups to Kubernetes, around a year before I left.
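The caching split described here, long-lived models and short-lived user content, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the wrapper's actual code: the class `PrivacyAwareCache` and all of its method names are hypothetical.

```python
from typing import Any, Callable, Dict


class PrivacyAwareCache:
    """Keeps model objects across requests; drops per-request user data."""

    def __init__(self) -> None:
        self._models: Dict[str, Any] = {}      # long-lived: survives requests
        self._user_data: Dict[str, Any] = {}   # short-lived: one request only

    def get_model(self, name: str, loader: Callable[[], Any]) -> Any:
        # Load a model the first time it is requested, then reuse it.
        if name not in self._models:
            self._models[name] = loader()
        return self._models[name]

    def put_user_data(self, key: str, value: Any) -> None:
        self._user_data[key] = value

    def get_user_data(self, key: str) -> Any:
        return self._user_data[key]

    def user_data_count(self) -> int:
        return len(self._user_data)

    def run_request(self, handler: Callable[["PrivacyAwareCache"], Any]) -> Any:
        # Clear user content after every request, even if the handler raises.
        try:
            return handler(self)
        finally:
            self._user_data.clear()
```

Putting the eviction in a `finally` block is the key design choice in this sketch: user content is removed on both the success and the failure path, while the model dictionary is never touched.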

The promotion pathway between experimental and production was the conceptual core of the whole design. A workflow could start as an exploration on the experimental path. If it proved valuable, we'd identify the specific nodes and models it depended on, run those through legal review, and add them to the production path. Once the workflow ran cleanly on production, it could be deployed to real users. The platform didn't just provide two environments — it provided a journey from inception to production with consistent tooling at every stage.
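The "identify the nodes and models it depends on" step lends itself to a small check. The sketch below assumes a workflow exported in ComfyUI's API format, where each node entry carries a `class_type` and an `inputs` mapping. The function names, the approved sets, the file-suffix heuristic for spotting model files, and `HypotheticalCommunityNode` are all assumptions made for illustration, not the platform's actual review tooling.

```python
from typing import Any, Dict, List, Set, Tuple

Workflow = Dict[str, Dict[str, Any]]

# Heuristic (an assumption): treat string inputs with these suffixes as model files.
MODEL_SUFFIXES = (".safetensors", ".ckpt", ".pt")


def workflow_dependencies(workflow: Workflow) -> Tuple[Set[str], Set[str]]:
    """Collect the node types and model files a workflow references."""
    node_types: Set[str] = set()
    model_files: Set[str] = set()
    for node in workflow.values():
        node_types.add(node["class_type"])
        for value in node.get("inputs", {}).values():
            # Links to other nodes are lists, so only plain strings are checked.
            if isinstance(value, str) and value.endswith(MODEL_SUFFIXES):
                model_files.add(value)
    return node_types, model_files


def promotion_blockers(
    workflow: Workflow, approved_nodes: Set[str], approved_models: Set[str]
) -> Dict[str, List[str]]:
    """List what still needs review before the workflow can run on production."""
    node_types, model_files = workflow_dependencies(workflow)
    return {
        "nodes": sorted(node_types - approved_nodes),
        "models": sorted(model_files - approved_models),
    }


example_workflow: Workflow = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "base_model.safetensors"}},
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a cat", "clip": ["1", 1]}},
    "3": {"class_type": "HypotheticalCommunityNode",
          "inputs": {"conditioning": ["2", 0]}},
}

blockers = promotion_blockers(
    example_workflow,
    approved_nodes={"CheckpointLoaderSimple", "CLIPTextEncode"},
    approved_models=set(),
)
```

In this example the result flags one unapproved node type and one unapproved model file, which is the list that would go to review.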

Beyond the two paths themselves, several pieces of platform infrastructure made the system actually usable:

- A custom ComfyUI shell UI that wrapped the standard ComfyUI interface with features the team needed: workflow management, simplified input/output views for teams who didn't want the full node graph complexity, and high-level abstractions for common operations. Many of these features were eventually built into ComfyUI itself by the upstream team, at which point we removed our versions.

- Lens Studio integration through specialized project templates, letting design engineers and lens creators move from a ComfyUI workflow to a deployable Snapchat lens through a structured pipeline.

- Deployment tools for the AR Creative Research team that let them upload workflows and use the production path to generate large batches of test images with various prompts and inputs, which could then be safety-screened and considered for lens deployment.

- Specialized resources for the Bitmoji team, including dedicated server allocations, custom extensions, and integration with their existing creative tools (notably community-built nodes that connected ComfyUI to local Blender instances, letting them model basic scenes and bring them to life with generative AI).

The custom node work was substantial. Across the three years, I built hundreds of custom nodes for the platform — production-integration nodes for headless execution with binary inputs and outputs (since the UI's caching behavior wasn't appropriate for production), wrappers around LLM and VLM models from various providers, Firebase integration nodes for image, audio, text, and video assets, utility nodes that filled gaps in the community ecosystem, and stable replacements for community nodes that weren't reliable enough for our use cases. Many of these nodes were eventually consumed by Snap Productions, the AI video creation platform I built on top of this infrastructure, where the architecture expanded significantly to support new requirements. (See the Snap Productions case study for that next-generation work.)
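For readers unfamiliar with ComfyUI custom nodes, the general shape looks roughly like the sketch below. It follows ComfyUI's usual node conventions (`INPUT_TYPES`, `RETURN_TYPES`, `FUNCTION`, `CATEGORY`, `NODE_CLASS_MAPPINGS`), but the node itself is hypothetical: `Base64BytesInput` and the custom `BYTES` type name are assumptions for illustration and are not among the nodes actually built for the platform.

```python
import base64


class Base64BytesInput:
    """Hypothetical node: take a base64 string from an API caller, emit raw bytes.

    A headless deployment cannot rely on files uploaded through the UI, so
    binary inputs have to arrive inside the request payload instead.
    """

    CATEGORY = "example/io"
    RETURN_TYPES = ("BYTES",)   # custom type name, assumed for this sketch
    RETURN_NAMES = ("data",)
    FUNCTION = "decode"         # name of the method ComfyUI should call

    @classmethod
    def INPUT_TYPES(cls):
        return {"required": {"payload_b64": ("STRING", {"default": ""})}}

    def decode(self, payload_b64):
        # Node functions return a tuple whose entries match RETURN_TYPES.
        return (base64.b64decode(payload_b64),)


# ComfyUI discovers nodes through these module-level mappings.
NODE_CLASS_MAPPINGS = {"Base64BytesInput": Base64BytesInput}
NODE_DISPLAY_NAME_MAPPINGS = {"Base64BytesInput": "Base64 Bytes Input (example)"}
```

A downstream node would then need to accept the same `BYTES` type to turn the payload into an image or audio tensor; that part is omitted here to keep the sketch dependency-free.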

By the time I left, the platform had been running for about three years, served roughly ten teams at varying levels of intensity, and had become the primary discovery and prototyping environment for generative AI work at Snap.

What it enabled

The platform's value showed up most clearly in the specific things it made possible. Five examples span the range:

Shipped Snapchat lens features. Several Snapchat lenses ran on workflows built and tested on the platform. The AR Creative Research team, in particular, adopted ComfyUI as a primary tool for prototyping new lens concepts. They'd build workflows in the experimental environment, generate large batches of test images using their prompts and various input photos, get those screened for safety, and — for workflows that passed — deploy through specialized Lens Studio templates I'd built for integrating ComfyUI workflows into the lens pipeline. The platform changed how that team worked: rather than waiting for ML teams to build a capability, they could explore and produce concepts directly, with my team handling the deployment infrastructure underneath.

Training data generation for distilled real-time models. This was one of the platform's most valuable use cases, and one that wouldn't have happened without it. Snap's ML research team had been developing distilled Stable Diffusion models: small, fast, single-purpose models designed for real-time inference and capable of on-device deployment. Each distilled model needed a large, carefully varied training dataset of input-output pairs from a specific source workflow. The platform was where those datasets got generated.

The pattern worked like this: an ML engineer or design engineer would build a ComfyUI workflow that did a specific thing well — a particular style transfer, a specific character transformation, a constrained generation with ControlNet conditioning. The workflow could be anything that worked with a consistent input (a face, a person in roughly the same position) and produced the desired effect. Then the platform would generate thousands of variations: different prompts, different reference images, different conditioning inputs, producing a training set that captured the full behavior space of the source workflow. The distillation team would use that data to train a tiny model that ran the same effect in real time.
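The variation step can be pictured as expanding one template workflow across several input axes. The sketch below assumes an API-format workflow (node id mapped to `class_type` and `inputs`) that has been trimmed to the inputs being varied. The function `expand_variations`, the node ids, and the file names are hypothetical, and the platform's real batch tooling may have worked quite differently.

```python
import copy
import itertools
from typing import Any, Dict, Iterator, List, Tuple

Workflow = Dict[str, Dict[str, Any]]
Slot = Tuple[str, str]  # (node id, input name)


def expand_variations(template: Workflow, axes: Dict[Slot, List[Any]]) -> Iterator[Workflow]:
    """Yield one workflow per combination of values across all axes."""
    slots = list(axes)
    for combo in itertools.product(*(axes[slot] for slot in slots)):
        job = copy.deepcopy(template)  # never mutate the shared template
        for (node_id, input_name), value in zip(slots, combo):
            job[node_id]["inputs"][input_name] = value
        yield job


# Trimmed template: only the inputs that get varied are shown.
template: Workflow = {
    "1": {"class_type": "CLIPTextEncode", "inputs": {"text": ""}},
    "2": {"class_type": "LoadImage", "inputs": {"image": ""}},
    "3": {"class_type": "KSampler", "inputs": {"seed": 0}},
}

axes: Dict[Slot, List[Any]] = {
    ("1", "text"): ["watercolor portrait", "comic book portrait"],
    ("2", "image"): ["face_001.png", "face_002.png"],
    ("3", "seed"): [1, 2, 3],
}

jobs = list(expand_variations(template, axes))  # 2 * 2 * 3 = 12 jobs
```

Each generated workflow, paired with its input image and resulting output, would become one training example for the distilled model.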

We even opened a beta version of this capability to external creative makers and lens creators — they could build workflows in our version of ComfyUI and generate training data for their own specialized models. This was a meaningful expansion: not just internal teams using the platform, but external creators participating in the same infrastructure.

The ML engineering work of designing the distillation pipelines and optimizing the resulting models was substantial in its own right. The platform's contribution was making the data side of that effort tractable — producing the volume and variation of training data that distillation required, without each distillation project becoming a custom data engineering effort.

Bitmoji team workflows. The Bitmoji team became one of the platform's heaviest users, eventually getting dedicated server allocations and custom extensions to support their work. Their workflows ranged across the spectrum: generating clothing concepts for Bitmoji avatars, placing Bitmojis in generated scenes, exploring new visual styles for character rendering. I supported them through a biweekly working session with their primary workflow author — typically one person who'd build a workflow that two or three other team members would then use, varying prompts and inputs to produce specific creative outputs. This pattern repeated across other teams: a small number of platform-fluent users built workflows that scaled to broader team usage.

One distinctive piece of their work was integrating ComfyUI with local Blender instances — community-built nodes let them model a basic 3D scene in Blender and bring it to life with generative AI through ComfyUI. We helped a couple of their heavy users with the setup and the mechanisms to make it work cleanly with our server-hosted ComfyUI instances. It was one of the more creative workflows on the platform and the kind of thing that's hard to plan for in advance — the platform succeeded because it could absorb unexpected use cases like this when they emerged.

Early Easy Lens prototyping. Easy Lens — now Snap's standalone app that replaced the mobile edition of Lens Studio — used the platform during its early prototyping phase. The early versions of that system used ComfyUI workflows orchestrated by AI agents to generate lens concepts from user prompts. The platform served as the foundation that let that team prototype the concept quickly and prove the agent-driven approach worked before the product matured into its current form. It's a useful illustration of the platform's intended arc: accelerate early-stage work, then let products graduate to their own dedicated architectures when scale or specialization demands it.

Fine-tuning Qwen Image Edit on Bitmoji data. This was a smaller piece of work that captures the economics dimension of what the platform enabled. In late 2025, the broader Snap ecosystem had successful experiences built on Nano Banana (Gemini's image editing capability), but the API costs constrained how aggressively those experiences could grow. Looking for cheaper alternatives that could handle Bitmoji-specific use cases, I trained a custom LoRA on Qwen Image Edit using a dataset I generated and curated entirely through the platform: Bitmoji avatars in various scenes, wearing specific clothes, holding referenced objects, with multiple characters in single images.

The training data could only come from the kind of varied generation work the platform made easy: thousands of carefully conditioned Bitmoji images, generated through ComfyUI workflows that combined multiple Bitmoji reference inputs into single composed scenes. The resulting model handled multi-character composition, clothing reference transfer, and identity preservation for Bitmoji-specific use cases. The work demonstrated the platform's value end-to-end: it made the training data generation tractable, and it produced a working model at meaningfully lower inference cost than the API-based alternatives.

This kind of work — exploring whether a cheaper internal model could replace an expensive external API for a specific use case — is the design engineering pattern in microcosm. The job is to prototype the path and prove the approach works. Production execution and final shipping decisions belong to others.

What I learned

Three years of operating this platform taught me things about how applied AI tooling actually succeeds inside organizations — lessons that I think generalize beyond the specifics of ComfyUI or Snap.

The most valuable AI platforms bridge communities that don't naturally talk to each other. The disconnect between ML research/engineering and the OSS creative AI community wasn't unique to Snap. Most large companies have a version of this divide, and it gets larger as the OSS ecosystem accelerates. The companies that ship AI features fastest will be the ones that close this gap structurally — by building platforms where research velocity, production rigor, and creative experimentation can coexist on shared infrastructure. The technical work is real, but the harder work is organizational: convincing both communities that the other has something to offer, and building tooling that respects how each one actually works.

Designing for multiple personas is harder than designing for one, and it's the whole point. The platform served design engineers, ML researchers, AR creative researchers, the Bitmoji team, the safety team, and eventually PMs. Each had different needs, different fluency levels, different mental models. A platform that served only one of these audiences would have been simpler to build but would have had a fraction of the impact. The interface decisions — the simplified UI shell, the workflow templates, the integration points with Lens Studio, the team-specific extensions — were all about making the same underlying infrastructure usable by people who didn't share a single way of working. That kind of design isn't optional in a real platform; it's the work.

Open-source momentum is engineering velocity. The reason the platform stayed valuable for three years is that ComfyUI itself kept getting better, and the surrounding ecosystem kept producing capabilities the platform could absorb. Every new model release, every new technique, every new community node was something the platform could potentially adopt — sometimes within days. Trying to build all of that internally would have meant constantly falling behind. Building on top of a thriving OSS substrate meant the platform's capabilities grew without the platform team having to grow proportionally. That tradeoff — accepting some dependency on the OSS community in exchange for absorbing its velocity — was the single most important architectural bet, and it paid off.

The "prototype and hand off" pattern is a force multiplier when the platform supports it. My job wasn't to ship every feature myself. It was to prove approaches worked, build the workflows that demonstrated them, and hand off to teams who could own the production execution. The platform made this pattern tractable — handoff is much easier when both sides are working with the same tooling, the same workflows, and the same underlying infrastructure. Without the platform, every handoff involved translation. With it, the handoff was closer to a clean transfer of artifacts that already worked.

Platforms succeed when they enable graduation, not when they prevent it. This is the lesson I came to most slowly. Early on, I assumed the success metric was "more workflows running on the platform forever." What I came to understand is that the right metric is closer to "more things that started on the platform and grew into something dedicated when they needed to." Easy Lens graduating from ComfyUI workflows into its own standalone app is the pattern working correctly. Snap Productions outgrowing the original infrastructure and prototyping its own next-generation architecture is the same pattern. A platform that accelerates the early stages and then steps out of the way when something has earned dedicated infrastructure is doing its job. A platform that tries to hold onto everything it ever served becomes a constraint.

The two-communities dynamic is evolving, and that's worth understanding. When the platform started in 2023, the gap between ML researchers and the OSS creative AI community was real and large. By 2026, that gap has narrowed considerably. Most major OSS model releases now include ComfyUI support out of the box, or have community-built support within days. AI terminology has bled into general engineering conversation. Researchers at companies like Snap now know what ComfyUI is, even if they don't use it daily. The tent for people interacting and working with ML models has gotten much bigger, and the boundaries between research, engineering, design, and creative practice are softer than they were three years ago. I expect this trend to continue — and I expect the next generation of AI platforms will be designed for a world where that integration is the starting point, not the destination.


That partnership turned out to be one of the most valuable parts of the project. The platform succeeded because it had two committed owners — one of us close to the OSS community and the prototyping work, one of us close to production infrastructure — building toward a shared design.

The dual-path architecture

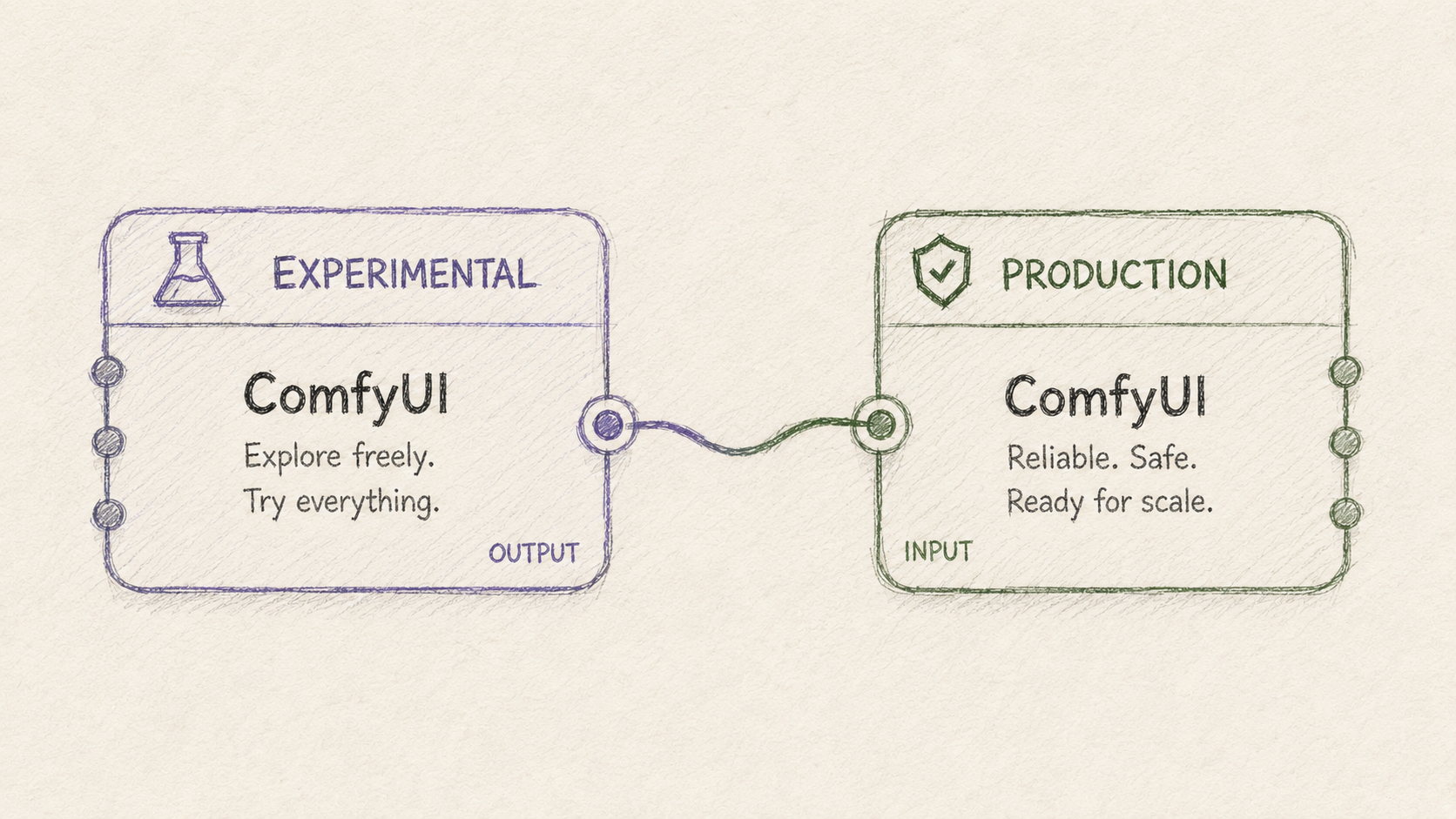

The platform was designed around a single conceptual move: separating exploration from production while keeping them mechanically aligned.

Two ComfyUI deployments ran in parallel, each serving a different purpose, but built from the same underlying image so that workflows could move between them with minimal translation.

The experimental path was open. Anything could be installed: community custom nodes, experimental models, in-development extensions, half-working integrations. I maintained it as the primary user, adding new nodes and capabilities as the OSS community released them and as internal teams requested specific tools. This was where discovery happened — where design engineers, AR creative researchers, the Bitmoji team, ML engineers, safety researchers, and eventually product managers came to try things, prototype features, and learn what was possible. It ran on GCP instance groups, with session caching that mostly kept the same user on the same server through a workflow.

The production path was hardened. Only legally approved nodes and models were installed. Everything had been vetted for licensing, safety, and dependency stability. The production path served as a testable endpoint that exactly matched the real production infrastructure — same Docker image, same configuration — so that a workflow validated here could be expected to behave the same way when deployed to actual production traffic.

Behind the production path sat the real production infrastructure: a custom ComfyUI wrapper, built on top of Snap's existing ML model serving infrastructure, that ran workflows headless without the UI. This wrapper had its own caching implementation, which kept models in memory across requests while evicting user-generated content after each execution. That separation mattered for privacy compliance. The production path eventually moved from GCP instance groups to Kubernetes, around a year before I left.
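The caching split described above can be illustrated with a minimal sketch. It is not the wrapper's actual code: the class name `PrivacyAwareCache`, the `fake_loader` stand-in, and the model name `hypothetical-model` are all assumptions made for illustration. The idea is only that model objects live in one long-lived store, while anything derived from user input lives in a separate store that is cleared when the request ends, even if execution fails.

```python
from typing import Any, Callable, Dict, List


class PrivacyAwareCache:
    """Keeps models across requests; drops per-request user data afterwards."""

    def __init__(self) -> None:
        self._models: Dict[str, Any] = {}        # long-lived, shared by all requests
        self._request_data: Dict[str, Any] = {}  # user content, one request only

    def get_model(self, name: str, loader: Callable[[str], Any]) -> Any:
        # Load on first use, then reuse the same object on later requests.
        if name not in self._models:
            self._models[name] = loader(name)
        return self._models[name]

    def put_request_data(self, key: str, value: Any) -> None:
        self._request_data[key] = value

    def end_request(self) -> None:
        # Evict everything that came from, or was derived from, user input.
        self._request_data.clear()


LOAD_LOG: List[str] = []  # records how many times a model was actually loaded


def fake_loader(name: str) -> Callable[[bytes], int]:
    # Hypothetical stand-in for an expensive model load.
    LOAD_LOG.append(name)
    return lambda data: len(data)


def run_request(cache: PrivacyAwareCache, user_image: bytes) -> int:
    model = cache.get_model("hypothetical-model", fake_loader)
    cache.put_request_data("input_image", user_image)
    try:
        return model(user_image)
    finally:
        # Runs on success and on failure, so user data never outlives the request.
        cache.end_request()


cache = PrivacyAwareCache()
sizes = [run_request(cache, b"abc"), run_request(cache, b"defgh")]
```

After both requests, the model has been loaded once and the per-request store is empty. Putting the eviction in a `finally` block is the design choice worth noting: a crashed workflow should not leave user content behind.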

The promotion pathway between experimental and production was the conceptual core of the whole design. A workflow could start as an exploration on the experimental path. If it proved valuable, we'd identify the specific nodes and models it depended on, run those through legal review, and add them to the production path. Once the workflow ran cleanly on production, it could be deployed to real users. The platform didn't just provide two environments — it provided a journey from inception to production with consistent tooling at every stage.
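The "identify the specific nodes and models it depended on" step lends itself to a small script. The sketch below assumes the workflow is exported in ComfyUI's API format (a JSON object mapping node ids to `class_type` and `inputs`). The function names, the allowlists, the node `HypotheticalCommunityNode`, and the filename `base_model.safetensors` are illustrative assumptions, and spotting model files by extension is a heuristic rather than anything ComfyUI guarantees.

```python
from typing import Dict, List, Set, Tuple

# Heuristic: string inputs ending in these extensions are treated as model files.
MODEL_EXTENSIONS = (".safetensors", ".ckpt", ".pt", ".pth")


def workflow_dependencies(workflow: Dict[str, dict]) -> Tuple[Set[str], Set[str]]:
    """Collect the node types and model filenames a workflow refers to."""
    node_types: Set[str] = set()
    model_files: Set[str] = set()
    for node in workflow.values():
        node_types.add(node["class_type"])
        for value in node.get("inputs", {}).values():
            # Links to other nodes are lists like ["1", 0]; only strings can be filenames.
            if isinstance(value, str) and value.lower().endswith(MODEL_EXTENSIONS):
                model_files.add(value)
    return node_types, model_files


def promotion_blockers(
    workflow: Dict[str, dict], approved_nodes: Set[str], approved_models: Set[str]
) -> Dict[str, List[str]]:
    """Return what still needs review before the workflow can run on production."""
    node_types, model_files = workflow_dependencies(workflow)
    return {
        "nodes": sorted(node_types - approved_nodes),
        "models": sorted(model_files - approved_models),
    }


workflow = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "base_model.safetensors"}},
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a portrait", "clip": ["1", 1]}},
    "3": {"class_type": "HypotheticalCommunityNode",
          "inputs": {"model": ["1", 0]}},
}

blockers = promotion_blockers(
    workflow,
    approved_nodes={"CheckpointLoaderSimple", "CLIPTextEncode"},
    approved_models=set(),
)
```

Here `blockers` lists one unapproved node type and one unapproved model file, which is the shortlist that would go to review.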

Beyond the two paths themselves, several pieces of platform infrastructure made the system actually usable:

- A custom ComfyUI shell UI that wrapped the standard ComfyUI interface with features the team needed: workflow management, simplified input/output views for teams who didn't want the full node graph complexity, and high-level abstractions for common operations. Many of these features were eventually built into ComfyUI itself by the upstream team, at which point we removed our versions.

- Lens Studio integration through specialized project templates, letting design engineers and lens creators move from a ComfyUI workflow to a deployable Snapchat lens through a structured pipeline.

- Deployment tools for the AR Creative Research team that let them upload workflows and use the production path to generate large batches of test images with various prompts and inputs, which could then be safety-screened and considered for lens deployment.

- Specialized resources for the Bitmoji team, including dedicated server allocations, custom extensions, and integration with their existing creative tools (notably community-built nodes that connected ComfyUI to local Blender instances, letting them model basic scenes and bring them to life with generative AI).

The custom node work was substantial. Across the three years, I built hundreds of custom nodes for the platform: production-integration nodes for headless execution with binary inputs and outputs (since the UI's caching behavior wasn't appropriate for production), wrappers around LLMs and VLMs from various providers, Firebase integration nodes for image, audio, text, and video assets, utility nodes that filled gaps in the community ecosystem, and stable replacements for community nodes that weren't reliable enough for our use cases. Many of these nodes were eventually consumed by Snap Productions, the AI video creation platform I built on top of this infrastructure, where the architecture expanded significantly to support new requirements. (See the Snap Productions case study for that next-generation work.)
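For readers who haven't written one, a ComfyUI custom node is a plain Python class that follows a few naming conventions (`INPUT_TYPES`, `RETURN_TYPES`, `FUNCTION`, `CATEGORY`) and is registered through `NODE_CLASS_MAPPINGS`. The sketch below shows the general shape of a node that accepts its input inline in the request rather than from a file uploaded through the UI. It is not one of the platform's nodes: the class `Base64ToBytes`, the custom type name `BYTES`, and the category string are assumptions for illustration.

```python
import base64


class Base64ToBytes:
    """Hypothetical node: decode a base64 string carried in the request payload."""

    @classmethod
    def INPUT_TYPES(cls):
        # One required string input; it arrives inline with the workflow request.
        return {
            "required": {
                "payload_b64": ("STRING", {"default": "", "multiline": False}),
            }
        }

    RETURN_TYPES = ("BYTES",)  # hypothetical custom type name, not a built-in one
    RETURN_NAMES = ("data",)
    FUNCTION = "decode"        # name of the method ComfyUI calls to run the node
    CATEGORY = "example/headless"

    def decode(self, payload_b64: str):
        # validate=True raises on non-base64 characters instead of skipping them.
        # Node outputs are returned as a tuple, one entry per RETURN_TYPES item.
        return (base64.b64decode(payload_b64, validate=True),)


# ComfyUI discovers nodes through these module-level mappings.
NODE_CLASS_MAPPINGS = {"Base64ToBytes": Base64ToBytes}
NODE_DISPLAY_NAME_MAPPINGS = {"Base64ToBytes": "Base64 To Bytes (example)"}

# Exercising the node outside ComfyUI, the way a unit test might:
node = Base64ToBytes()
encoded = base64.b64encode(b"hello").decode("ascii")
result = getattr(node, Base64ToBytes.FUNCTION)(encoded)
```

Because the node is an ordinary class, it can be tested without starting ComfyUI, which helps when a node has to behave identically on both paths.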

By the time I left, the platform had been running for about three years, served roughly ten teams at varying levels of intensity, and had become the primary discovery and prototyping environment for generative AI work at Snap.

What it enabled

The platform's value showed up most clearly in the specific things it made possible. Five examples span the range:

Shipped Snapchat lens features. Several Snapchat lenses ran on workflows built and tested on the platform. The AR Creative Research team, in particular, adopted ComfyUI as a primary tool for prototyping new lens concepts. They'd build workflows in the experimental environment, generate large batches of test images using their prompts and various input photos, get those screened for safety, and — for workflows that passed — deploy through specialized Lens Studio templates I'd built for integrating ComfyUI workflows into the lens pipeline. The platform changed how that team worked: rather than waiting for ML teams to build a capability, they could explore and produce concepts directly, with my team handling the deployment infrastructure underneath.

Training data generation for distilled real-time models. This was one of the platform's most valuable use cases, and one that wouldn't have happened without it. Snap's ML research team had been developing distilled Stable Diffusion models: small, fast, single-purpose models designed for real-time inference and capable of on-device deployment. Each distilled model needed a large, carefully varied training dataset of input-output pairs from a specific source workflow. The platform was where those datasets got generated.

The pattern worked like this: an ML engineer or design engineer would build a ComfyUI workflow that did a specific thing well — a particular style transfer, a specific character transformation, a constrained generation with ControlNet conditioning. The workflow could be anything that worked with a consistent input (a face, a person in roughly the same position) and produced the desired effect. Then the platform would generate thousands of variations: different prompts, different reference images, different conditioning inputs, producing a training set that captured the full behavior space of the source workflow. The distillation team would use that data to train a tiny model that ran the same effect in real time.
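The variation step can be sketched as patching a workflow template once per combination of inputs. This is an illustration under stated assumptions, not the platform's batch tooling: the function `generate_variations`, the node ids, the prompts, and the filenames are hypothetical, and the template is trimmed to the three fields being patched (a real graph would also carry the model loader, the latent, the decoder, and the links between them).

```python
import copy
import itertools
import random
from typing import Dict, Iterator, List


def generate_variations(
    template: Dict[str, dict],
    prompts: List[str],
    reference_images: List[str],
    prompt_node: str,
    image_node: str,
    sampler_node: str,
    seed: int = 0,
) -> Iterator[Dict[str, dict]]:
    """Yield one patched copy of the template per (prompt, image) pair."""
    rng = random.Random(seed)  # seeded so a batch can be regenerated exactly
    for prompt, image in itertools.product(prompts, reference_images):
        job = copy.deepcopy(template)  # never mutate the shared template
        job[prompt_node]["inputs"]["text"] = prompt
        job[image_node]["inputs"]["image"] = image
        job[sampler_node]["inputs"]["seed"] = rng.randrange(2**32)
        yield job


# Trimmed API-format template: only the inputs this sketch patches are shown.
template = {
    "1": {"class_type": "CLIPTextEncode", "inputs": {"text": ""}},
    "2": {"class_type": "LoadImage", "inputs": {"image": ""}},
    "3": {"class_type": "KSampler", "inputs": {"seed": 0}},
}

jobs = list(
    generate_variations(
        template,
        prompts=["watercolor style", "comic style"],
        reference_images=["face_a.png", "face_b.png"],
        prompt_node="1",
        image_node="2",
        sampler_node="3",
    )
)
```

Two prompts and two reference images give four jobs. Scaling to thousands is a matter of longer lists, and each job's output, paired with its input image, becomes one training example.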

We even opened a beta version of this capability to external creative makers and lens creators — they could build workflows in our version of ComfyUI and generate training data for their own specialized models. This was a meaningful expansion: not just internal teams using the platform, but external creators participating in the same infrastructure.

The ML engineering work of designing the distillation pipelines and optimizing the resulting models was substantial in its own right. The platform's contribution was making the data side of that effort tractable — producing the volume and variation of training data that distillation required, without each distillation project becoming a custom data engineering effort.

Bitmoji team workflows. The Bitmoji team became one of the platform's heaviest users, eventually getting dedicated server allocations and custom extensions to support their work. Their workflows ranged across the spectrum: generating clothing concepts for Bitmoji avatars, placing Bitmojis in generated scenes, exploring new visual styles for character rendering. I supported them through a biweekly working session with their primary workflow author — typically one person who'd build a workflow that two or three other team members would then use, varying prompts and inputs to produce specific creative outputs. This pattern repeated across other teams: a small number of platform-fluent users built workflows that scaled to broader team usage.

One distinctive piece of their work was integrating ComfyUI with local Blender instances — community-built nodes let them model a basic 3D scene in Blender and bring it to life with generative AI through ComfyUI. We helped a couple of their heavy users with the setup and the mechanisms to make it work cleanly with our server-hosted ComfyUI instances. It was one of the more creative workflows on the platform and the kind of thing that's hard to plan for in advance — the platform succeeded because it could absorb unexpected use cases like this when they emerged.

Early Easy Lens prototyping. Easy Lens — now Snap's standalone app that replaced the mobile edition of Lens Studio — used the platform during its early prototyping phase. The early versions of that system used ComfyUI workflows orchestrated by AI agents to generate lens concepts from user prompts. The platform served as the foundation that let that team prototype the concept quickly and prove the agent-driven approach worked before the product matured into its current form. It's a useful illustration of the platform's intended arc: accelerate early-stage work, then let products graduate to their own dedicated architectures when scale or specialization demands it.

Fine-tuning Qwen Image Edit on Bitmoji data. This was a smaller piece of work that captures the economics dimension of what the platform enabled. In late 2025, the broader Snap ecosystem had successful experiences built on Nano Banana (Gemini's image editing capability), but the API costs constrained how aggressively those experiences could grow. Looking for cheaper alternatives that could handle Bitmoji-specific use cases, I trained a custom LoRA on Qwen Image Edit using a dataset I generated and curated entirely through the platform: Bitmoji avatars in various scenes, wearing specific clothes, holding referenced objects, with multiple characters in single images.

The training data could only come from the kind of varied generation work the platform made easy: thousands of carefully conditioned Bitmoji images, generated through ComfyUI workflows that combined multiple Bitmoji reference inputs into single composed scenes. The resulting model handled multi-character composition, clothing reference transfer, and identity preservation for Bitmoji-specific use cases. The work demonstrated the platform's value end-to-end: it made the training data generation tractable, and it produced a working model at meaningfully lower inference cost than the API-based alternatives.

This kind of work — exploring whether a cheaper internal model could replace an expensive external API for a specific use case — is the design engineering pattern in microcosm. The job is to prototype the path and prove the approach works. Production execution and final shipping decisions belong to others.

What I learned

Three years of operating this platform taught me things about how applied AI tooling actually succeeds inside organizations — lessons that I think generalize beyond the specifics of ComfyUI or Snap.

The most valuable AI platforms bridge communities that don't naturally talk to each other. The disconnect between ML research/engineering and the OSS creative AI community wasn't unique to Snap. Most large companies have a version of this divide, and it gets larger as the OSS ecosystem accelerates. The companies that ship AI features fastest will be the ones that close this gap structurally — by building platforms where research velocity, production rigor, and creative experimentation can coexist on shared infrastructure. The technical work is real, but the harder work is organizational: convincing both communities that the other has something to offer, and building tooling that respects how each one actually works.

Designing for multiple personas is harder than designing for one, and it's the whole point. The platform served design engineers, ML researchers, AR creative researchers, the Bitmoji team, the safety team, and eventually PMs. Each had different needs, different fluency levels, different mental models. A platform that served only one of these audiences would have been simpler to build but would have had a fraction of the impact. The interface decisions — the simplified UI shell, the workflow templates, the integration points with Lens Studio, the team-specific extensions — were all about making the same underlying infrastructure usable by people who didn't share a single way of working. That kind of design isn't optional in a real platform; it's the work.

Open-source momentum is engineering velocity. The reason the platform stayed valuable for three years is that ComfyUI itself kept getting better, and the surrounding ecosystem kept producing capabilities the platform could absorb. Every new model release, every new technique, every new community node was something the platform could potentially adopt — sometimes within days. Trying to build all of that internally would have meant constantly falling behind. Building on top of a thriving OSS substrate meant the platform's capabilities grew without the platform team having to grow proportionally. That tradeoff — accepting some dependency on the OSS community in exchange for absorbing its velocity — was the single most important architectural bet, and it paid off.

The "prototype and hand off" pattern is a force multiplier when the platform supports it. My job wasn't to ship every feature myself. It was to prove approaches worked, build the workflows that demonstrated them, and hand off to teams who could own the production execution. The platform made this pattern tractable — handoff is much easier when both sides are working with the same tooling, the same workflows, and the same underlying infrastructure. Without the platform, every handoff involved translation. With it, the handoff was closer to a clean transfer of artifacts that already worked.

Platforms succeed when they enable graduation, not when they prevent it. This is the lesson I came to most slowly. Early on, I assumed the success metric was "more workflows running on the platform forever." What I came to understand is that the right metric is closer to "more things that started on the platform and grew into something dedicated when they needed to." Easy Lens graduating from ComfyUI workflows into its own standalone app is the pattern working correctly. Snap Productions outgrowing the original infrastructure and prototyping its own next-generation architecture is the same pattern. A platform that accelerates the early stages and then steps out of the way when something has earned dedicated infrastructure is doing its job. A platform that tries to hold onto everything it ever served becomes a constraint.

The two-communities dynamic is evolving, and that's worth understanding. When the platform started in 2023, the gap between ML researchers and the OSS creative AI community was real and large. By 2026, that gap has narrowed considerably. Most major OSS model releases now include ComfyUI support out of the box, or have community-built support within days. AI terminology has bled into general engineering conversation. Researchers at companies like Snap now know what ComfyUI is, even if they don't use it daily. The tent for people interacting and working with ML models has gotten much bigger, and the boundaries between research, engineering, design, and creative practice are softer than they were three years ago. I expect this trend to continue — and I expect the next generation of AI platforms will be designed for a world where that integration is the starting point, not the destination.

From innovation to production with ComfyUI

May 17, 2026

A cross-platform AI video creation tool for storytelling with persistent characters who can talk.

Role: Design Engineer (originating designer and primary experimental-path operator) — or refined variants we discussed: "Proposer, architect, and primary experimental-path operator" or "Originator, architect, primary operator (experimental path)"

Timeframe: 2023–2026 (3 years)

Scale: ~10 teams at peak, hundreds of custom nodes, multiple shipped Snapchat lens features

Tech: ComfyUI, GCP, Kubernetes, Docker, Python, Pytorch, Diffusers, Firebase, custom ComfyUI wrapper for production deployment

TL;DR

- Proposed and built Snap's internal ComfyUI platform with a dual-path architecture (experimental + production-approved) that bridged the gap between the open-source generative AI community and Snap's ML engineering, enabling the same tool to serve discovery, prototyping, and production deployment.

- Platform served roughly ten teams across three years, including Design Engineering, AR Creative Research, the Bitmoji team, ML researchers, and safety researchers — powering shipped Snapchat lens features, training data generation for distilled real-time models, and the early prototyping phase of products like Easy Lens (now Snap's standalone lens creation app).

- Built and maintained hundreds of custom nodes, custom production infrastructure with privacy-aware caching, team-specific tooling and templates, and the foundation that supported Snap Productions — a multi-clip AI video creation suite whose architecture extended this platform's design.

The disconnect

When generative AI took off with Stable Diffusion and ChatGPT in late 2022, two communities formed around it that barely talked to each other.

On one side were ML researchers and engineers — people who read the papers, understood the underlying architectures, and built the production systems that put models in front of users. On the other side was the open-source creative AI community: artists, designers, technologists, and curious newcomers who'd downloaded Automatic1111 or ComfyUI and were stitching together capabilities through extensions, custom nodes, and shared workflows. The OSS community moved fast — sometimes faster than the research-to-product pipelines inside large companies — because it was unconstrained by infrastructure decisions, model licensing concerns, or production engineering timelines.

At Snap, these two worlds existed inside the same building but rarely intersected. Most ML researchers and engineers I worked with had heard little of A1111 or ComfyUI. Meanwhile, a few of us on the design engineering side were using these tools daily — discovering capabilities through community workflows, building prototypes that demonstrated what was possible, and trying to translate those discoveries back to ML teams in a form they could productionize.

The result was three persistent problems:

- ML teams discovered new capabilities slowly. Techniques that were already commonplace in the OSS community took months to surface inside research and production teams.

- Productionizing a feature took a long time. Every workflow had to be reimplemented from scratch in optimized form by ML engineers, even when a working version already existed in ComfyUI.

- What the community could already do, we often couldn't. The OSS world had functional solutions for things like multi-character generation, controllable composition, and identity preservation — but those didn't reach Snap's products until ML teams could build their own versions.

A concrete example: a project we called Dreams.

Dreams was a feature where we'd generate creative images of users in different scenes. The initial implementation fine-tuned a complete Stable Diffusion model per user — expensive and slow. While that approach was the production path, I was experimenting with newer techniques from the OSS community. I trained dozens of smaller LoRA adapters using fewer images, faster and cheaper. For a stretch in 2023, I might have been the person at Snap with the most hands-on LoRA training experience, almost entirely because the OSS community had figured out how to do it and ML teams were focused elsewhere.

Then IP-Adapter emerged from the OSS world. I built workflows and prototypes with it, shared them with ML teams, and the company eventually moved away from expensive fine-tuning toward IP-Adapter for identity preservation.

But Dreams was for Snapchat — a product fundamentally about friends. So the natural next question was: how do you generate images with you and your friends? ML teams didn't have an answer. The OSS community, working through attention masks and segmentation models like Segment Anything (SAM), already did. I built ComfyUI workflows combining IP-Adapter, attention masks, and SAM-based segmentation for multi-character generation, shared them with ML engineers, and they integrated the techniques into their Diffusers pipelines for production.

This pattern — design engineers discovering capabilities in OSS, prototyping in ComfyUI, hand-carrying the insights to ML teams who then reimplemented them in production form — worked, but slowly.

Every cycle took weeks or months. Worse, the prototyping environment and the production environment were entirely different stacks: the OSS world for discovery, hand-coded Diffusers pipelines for production. Nothing flowed cleanly between them.

That's the gap the platform would eventually close.

The proposal

By mid-2023, I had been operating in that pattern — discovered OSS models and techniques, prototype in ComfyUI, hand off to ML teams — long enough to see its limits clearly. Every cycle worked, but every cycle was also a lossy translation. Workflows that ran fine in ComfyUI had to be reimplemented in production-grade Python by ML engineers. Discoveries that took an afternoon in the OSS world took months to ship inside the company. And the prototyping environment and the production environment shared almost no DNA.

The proposal I wrote was simple: what if the same tool we used for discovery, prototyping, and design could also be the tool we used for production?

The case for this was harder to make than it sounds. ComfyUI was, to most ML engineers at the time, a hobbyist tool — a fun way to play with diffusion models, not a serious production substrate. The standard production stack was hand-coded Diffusers pipelines, optimized end-to-end by ML engineers who knew the models deeply. Suggesting that ComfyUI could sit alongside that stack — not replacing it, but offering an additional path from inception to production for certain kinds of workflows — required making the case carefully.

The argument came down to four points:

The OSS community had momentum we couldn't match internally. Hundreds of new extensions appeared each month. New model architectures had ComfyUI support within days of release. The community was effectively doing applied AI R&D at a velocity no single ML team could replicate, and ComfyUI was where most of that velocity landed first.

The node-based architecture was a feature, not a workaround. ComfyUI forced users to understand the underlying components of a generative pipeline — encoders, samplers, conditioning, control mechanisms. That made it a genuinely good prototyping environment for cross-functional teams: designers and researchers and engineers could read the same graph and understand what was happening.

Extensibility meant we could build production-grade infrastructure on top. ComfyUI's extension system let us add the things production needed — proper caching, privacy-aware execution, integration with Snap's existing model serving infrastructure — without forking the tool itself.

The performance concerns were real but engineerable. Yes, running ComfyUI as a production substrate had overhead that hand-coded pipelines didn't. But for the right workflows — especially those that benefited from rapid iteration and OSS community velocity — the tradeoff was worth it. And for cases where it wasn't, ML teams could still take a workflow proven on the platform and reimplement it in their optimized pipelines. The platform wouldn't replace existing production paths; it would add a new one optimized for speed from inception to user.

I put this in a slide deck and pitched it. The proposal got buy-in, and the work began. I designed the dual-path architecture and owned the experimental path. A design engineering partner joined to lead the production path, with dedicated ML engineering support for the integration with Snap's production infrastructure. He ran point on the production side — including the meetings I couldn't always attend from Tokyo — which let me focus on what I did best: the experimental environment where discovery happened, and the platform-wide work of keeping both paths in sync.

That partnership turned out to be one of the most valuable parts of the project. The platform succeeded because it had two committed owners — one of us close to the OSS community and the prototyping work, one of us close to production infrastructure — building toward a shared design.

The dual-path architecture

The platform was designed around a single conceptual move: separating exploration from production while keeping them mechanically aligned.

Two ComfyUI deployments ran in parallel, each serving a different purpose, but built from the same underlying image so that workflows could move between them with minimal translation.

The experimental path was open. Anything could be installed: community custom nodes, experimental models, in-development extensions, half-working integrations. I maintained it as the primary user, adding new nodes and capabilities as the OSS community released them and as internal teams requested specific tools. This was where discovery happened — where design engineers, AR creative researchers, the Bitmoji team, ML engineers, safety researchers, and eventually product managers came to try things, prototype features, and learn what was possible. It ran on GCP instance groups, with session caching that mostly kept the same user on the same server through a workflow.

The production path was hardened. Only legally approved nodes and models were installed. Everything had been vetted for licensing, safety, and dependency stability. The production path served as a testable endpoint that exactly matched the real production infrastructure — same Docker image, same configuration — so that a workflow validated here could be expected to behave the same way when deployed to actual production traffic.

Behind the production path sat the real production infrastructure: a custom ComfyUI wrapper, built on top of Snap's existing ML model serving infrastructure, that ran workflows headless without the UI. This wrapper had its own caching implementation — critical for keeping models in memory across requests while expelling user-generated content after execution, which mattered for privacy compliance. The production path eventually moved from GCP instance groups to Kubernetes, around a year before I left.

The promotion pathway between experimental and production was the conceptual core of the whole design. A workflow could start as an exploration on the experimental path. If it proved valuable, we'd identify the specific nodes and models it depended on, run those through legal review, and add them to the production path. Once the workflow ran cleanly on production, it could be deployed to real users. The platform didn't just provide two environments — it provided a journey from inception to production with consistent tooling at every stage.

Beyond the two paths themselves, several pieces of platform infrastructure made the system actually usable:

- A custom ComfyUI shell UI that wrapped the standard ComfyUI interface with features the team needed: workflow management, simplified input/output views for teams who didn't want the full node graph complexity, and high-level abstractions for common operations. Many of these features were eventually built into ComfyUI itself by the upstream team, at which point we removed our versions.

- Lens Studio integration through specialized project templates, letting design engineers and lens creators move from a ComfyUI workflow to a deployable Snapchat lens through a structured pipeline.

- Deployment tools for the AR Creative Research team that let them upload workflows and use the production path to generate large batches of test images with various prompts and inputs, which could then be safety-screened and considered for lens deployment.

- Specialized resources for the Bitmoji team, including dedicated server allocations, custom extensions, and integration with their existing creative tools (notably community-built nodes that connected ComfyUI to local Blender instances, letting them model basic scenes and bring them to life with generative AI).

The custom node work was substantial. Across the three years, I built hundreds of custom nodes for the platform — production-integration nodes for headless execution with binary inputs and outputs (since the UI's caching behavior wasn't appropriate for production), wrappers around LLM and VLM models from various providers, Firebase integration nodes for image, audio, text, and video assets, utility nodes that filled gaps in the community ecosystem, and stable replacements for community nodes that weren't reliable enough for our use cases. Many of these nodes were eventually consumed by Snap Productions, the AI video creation platform I built on top of this infrastructure, where the architecture expanded significantly to support new requirements. (See the Snap Productions case study for that next-generation work.)

By the time I left, the platform had been running for about three years, served roughly ten teams at varying levels of intensity, and had become the primary discovery and prototyping environment for generative AI work at Snap.

What it enabled

The platform's value showed up most clearly in the specific things it made possible. Five examples span the range:

Shipped Snapchat lens features. Several Snapchat lenses ran on workflows built and tested on the platform. The AR Creative Research team, in particular, adopted ComfyUI as a primary tool for prototyping new lens concepts. They'd build workflows in the experimental environment, generate large batches of test images using their prompts and various input photos, get those screened for safety, and — for workflows that passed — deploy through specialized Lens Studio templates I'd built for integrating ComfyUI workflows into the lens pipeline. The platform changed how that team worked: rather than waiting for ML teams to build a capability, they could explore and produce concepts directly, with my team handling the deployment infrastructure underneath.

Training data generation for distilled real-time models. This was one of the platform's most valuable use cases, and one that wouldn't have happened without it. Snap's ML research team had been developing distilled Stable Diffusion models: small, fast, single-purpose models designed for real-time inference and capable of on-device deployment. Each distilled model needed a large, carefully varied training dataset of input-output pairs from a specific source workflow. The platform was where those datasets got generated.

The pattern worked like this: an ML engineer or design engineer would build a ComfyUI workflow that did a specific thing well — a particular style transfer, a specific character transformation, a constrained generation with ControlNet conditioning. The workflow could be anything that worked with a consistent input (a face, a person in roughly the same position) and produced the desired effect. Then the platform would generate thousands of variations: different prompts, different reference images, different conditioning inputs, producing a training set that captured the full behavior space of the source workflow. The distillation team would use that data to train a tiny model that ran the same effect in real time.
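The variation step described above can be sketched as a grid expansion over a workflow exported in ComfyUI's API format (a dict of node id to `class_type` and `inputs`). This is an illustrative sketch, not the platform's actual batch tooling: `build_jobs`, the toy workflow, the node ids, and the file names are all assumptions, and the workflow is trimmed to the inputs being varied.

```python
import copy
import itertools
import json


def build_jobs(workflow, axes):
    """Return one patched copy of `workflow` per combination of axis values.

    `workflow` maps node id -> {"class_type": ..., "inputs": {...}}.
    `axes` maps (node_id, input_name) -> list of candidate values.
    """
    keys = list(axes)
    jobs = []
    for combo in itertools.product(*(axes[k] for k in keys)):
        job = copy.deepcopy(workflow)  # never mutate the source workflow
        for (node_id, input_name), value in zip(keys, combo):
            job[node_id]["inputs"][input_name] = value
        jobs.append(job)
    return jobs


# Toy workflow (placeholder ids and file names, most inputs omitted).
base_workflow = {
    "1": {"class_type": "LoadImage", "inputs": {"image": "face_000.png"}},
    "2": {"class_type": "CLIPTextEncode", "inputs": {"text": ""}},
    "3": {"class_type": "KSampler", "inputs": {"seed": 0, "steps": 20}},
}

# Each axis is one dimension of variation: reference image, prompt, seed.
axes = {
    ("1", "image"): ["face_000.png", "face_001.png"],
    ("2", "text"): ["watercolor portrait", "clay figurine", "comic ink"],
    ("3", "seed"): [11, 22],
}

jobs = build_jobs(base_workflow, axes)
print(len(jobs))  # 2 images x 3 prompts x 2 seeds = 12 jobs

# Each job could then be wrapped as {"prompt": job} and queued on a server.
first_payload = json.dumps({"prompt": jobs[0]})
```

A full Cartesian product grows quickly, so a real run at the scale of thousands of samples would more likely sample combinations than enumerate every one.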

We even opened a beta version of this capability to external creative makers and lens creators — they could build workflows in our version of ComfyUI and generate training data for their own specialized models. This was a meaningful expansion: not just internal teams using the platform, but external creators participating in the same infrastructure.

The ML engineering work of designing the distillation pipelines and optimizing the resulting models was substantial in its own right. The platform's contribution was making the data side of that effort tractable — producing the volume and variation of training data that distillation required, without each distillation project becoming a custom data engineering effort.

Bitmoji team workflows. The Bitmoji team became one of the platform's heaviest users, eventually getting dedicated server allocations and custom extensions to support their work. Their workflows ranged across the spectrum: generating clothing concepts for Bitmoji avatars, placing Bitmojis in generated scenes, exploring new visual styles for character rendering. I supported them through a biweekly working session with their primary workflow author — typically one person who'd build a workflow that two or three other team members would then use, varying prompts and inputs to produce specific creative outputs. This pattern repeated across other teams: a small number of platform-fluent users built workflows that scaled to broader team usage.

One distinctive piece of their work was integrating ComfyUI with local Blender instances — community-built nodes let them model a basic 3D scene in Blender and bring it to life with generative AI through ComfyUI. We helped a couple of their heavy users with the setup and the mechanisms to make it work cleanly with our server-hosted ComfyUI instances. It was one of the more creative workflows on the platform and the kind of thing that's hard to plan for in advance — the platform succeeded because it could absorb unexpected use cases like this when they emerged.

Early Easy Lens prototyping. Easy Lens — now Snap's standalone app that replaced the mobile edition of Lens Studio — used the platform during its early prototyping phase. The early versions of that system used ComfyUI workflows orchestrated by AI agents to generate lens concepts from user prompts. The platform served as the foundation that let that team prototype the concept quickly and prove the agent-driven approach worked before the product matured into its current form. It's a useful illustration of the platform's intended arc: accelerate early-stage work, then let products graduate to their own dedicated architectures when scale or specialization demands it.

Fine-tuning Qwen Image Edit on Bitmoji data. This was a smaller piece of work that captures the economic dimension of what the platform enabled. In late 2025, the broader Snap ecosystem had successful experiences built on Nano Banana (Gemini's image editing capability), but the API costs constrained how aggressively those experiences could grow. Looking for cheaper alternatives that could handle Bitmoji-specific use cases, I trained a custom LoRA on Qwen Image Edit using a dataset I generated and curated entirely through the platform: Bitmoji avatars in various scenes, wearing specific clothes, holding referenced objects, with multiple characters in single images.

The training data could only come from the kind of varied generation work the platform made easy: thousands of carefully conditioned Bitmoji images, generated through ComfyUI workflows that combined multiple Bitmoji reference inputs into single composed scenes. The resulting model handled multi-character composition, clothing reference transfer, and identity preservation for Bitmoji-specific use cases. The work demonstrated the platform's value end-to-end: it made the training data generation tractable, and it produced a working model at meaningfully lower inference cost than the API-based alternatives.

This kind of work — exploring whether a cheaper internal model could replace an expensive external API for a specific use case — is the design engineering pattern in microcosm. The job is to prototype the path and prove the approach works. Production execution and final shipping decisions belong to others.

What I learned

Three years of operating this platform taught me things about how applied AI tooling actually succeeds inside organizations — lessons that I think generalize beyond the specifics of ComfyUI or Snap.

The most valuable AI platforms bridge communities that don't naturally talk to each other. The disconnect between ML research/engineering and the OSS creative AI community wasn't unique to Snap. Most large companies have a version of this divide, and it gets larger as the OSS ecosystem accelerates. The companies that ship AI features fastest will be the ones that close this gap structurally — by building platforms where research velocity, production rigor, and creative experimentation can coexist on shared infrastructure. The technical work is real, but the harder work is organizational: convincing both communities that the other has something to offer, and building tooling that respects how each one actually works.

Designing for multiple personas is harder than designing for one, and it's the whole point. The platform served design engineers, ML researchers, AR creative researchers, the Bitmoji team, the safety team, and eventually PMs. Each had different needs, different fluency levels, different mental models. A platform that served only one of these audiences would have been simpler to build but would have had a fraction of the impact. The interface decisions — the simplified UI shell, the workflow templates, the integration points with Lens Studio, the team-specific extensions — were all about making the same underlying infrastructure usable by people who didn't share a single way of working. That kind of design isn't optional in a real platform; it's the work.

Open-source momentum is engineering velocity. The reason the platform stayed valuable for three years is that ComfyUI itself kept getting better, and the surrounding ecosystem kept producing capabilities the platform could absorb. Every new model release, every new technique, every new community node was something the platform could potentially adopt — sometimes within days. Trying to build all of that internally would have meant constantly falling behind. Building on top of a thriving OSS substrate meant the platform's capabilities grew without the platform team having to grow proportionally. That tradeoff — accepting some dependency on the OSS community in exchange for absorbing its velocity — was the single most important architectural bet, and it paid off.

The "prototype and hand off" pattern is a force multiplier when the platform supports it. My job wasn't to ship every feature myself. It was to prove approaches worked, build the workflows that demonstrated them, and hand off to teams who could own the production execution. The platform made this pattern tractable — handoff is much easier when both sides are working with the same tooling, the same workflows, and the same underlying infrastructure. Without the platform, every handoff involved translation. With it, the handoff was closer to a clean transfer of artifacts that already worked.

Platforms succeed when they enable graduation, not when they prevent it. This is the lesson I came to most slowly. Early on, I assumed the success metric was "more workflows running on the platform forever." What I came to understand is that the right metric is closer to "more things that started on the platform and grew into something dedicated when they needed to." Easy Lens graduating from ComfyUI workflows into its own standalone app is the pattern working correctly. Snap Productions outgrowing the original infrastructure and prototyping its own next-generation architecture is the same pattern. A platform that accelerates the early stages and then steps out of the way when something has earned dedicated infrastructure is doing its job. A platform that tries to hold onto everything it ever served becomes a constraint.

The two-communities dynamic is evolving, and that's worth understanding. When the platform started in 2023, the gap between ML researchers and the OSS creative AI community was real and large. By 2026, that gap has narrowed considerably. Most major OSS model releases now include ComfyUI support out of the box, or have community-built support within days. AI terminology has bled into general engineering conversation. Researchers at companies like Snap now know what ComfyUI is, even if they don't use it daily. The tent for people interacting and working with ML models has gotten much bigger, and the boundaries between research, engineering, design, and creative practice are softer than they were three years ago. I expect this trend to continue — and I expect the next generation of AI platforms will be designed for a world where that integration is the starting point, not the destination.