An Agentic iOS App Creation System

May 17, 2026

A chat-driven system for building, iterating on, and deploying real iOS app prototypes from your phone.

Role: Design Engineer (proposer, architect, sole builder)

Timeframe: Early 2026 (approximately three weeks for the MVP, plus preceding deployment service infrastructure work)

Tech: Swift, SwiftUI, Claude Code, Claude Agent SDK (ported to Swift), MCP, Firebase (Realtime Database + Firestore), Tuist, Swift Package Manager, Go (deployment service)

TL;DR

- Built a working agentic iOS app creation system at Snap as a Design Engineer — users could chat with an agent from their phone to build, iterate on, and deploy real iOS app prototypes, then download builds directly to their device.

- Ported the Claude Agent SDK from TypeScript to Swift through agent-assisted dependency-graph analysis and milestone-tested execution, enabling the entire system to be built natively in Swift across iOS, macOS, and a developer-side menubar daemon.

- Architectural choices made the system tractable where similar approaches typically fail: keeping Claude Code execution local to each developer's machine, using Tuist instead of Xcode project files so the agent could manage modular Swift architecture, and building on an existing internal prototype deployment service I'd previously extended to support automatic updates.

The infrastructure that came first

This project began a few months earlier than it might seem.

Design engineering at Snap had its own tools for deploying prototypes — a service that produced QR-coded download links you could share with specific people. Useful, but limited. The links were undiscoverable to anyone you didn't share them with; the system occasionally broke; the website was basic. Originally built in Go by a design engineer who had since left, it stored build metadata in Firebase, organized by UUID.

I'd been using this service for the storytelling video editor (the iOS app described in the previous case study), and when an internal team requested a macOS version, I built one. Same SwiftUI codebase, different target. But this surfaced a follow-on problem: how would those users get updates? Bug fixes, model improvements, the inevitable churn of a tool under active development — none of it would reach them without an update mechanism.

So I extended the deployment service. The work ended up being more substantial than I'd initially scoped:

- Restructured the server to group builds by project rather than by UUID alone, with creators, versions, and per-version metadata

- Added macOS and Mac Catalyst support to the builder shell scripts, including the code-signing pipeline for CI

- Auto-extracted app icons during the build process and uploaded them to Firebase as version metadata (cached to avoid duplicates)

- Built a significantly improved web UI on top of the new server endpoints — projects became browsable, version histories visible, release notes supported in Markdown

- Added per-build visibility controls so authors could hide old or broken builds without deleting them

The piece that mattered most for what came next was a Swift Package for automatic updates. Any iOS or macOS app could integrate it; on launch or foreground, it would check the deployment service for newer versions of itself. If a newer version existed, the app could surface a UI for downloading and installing it. A shake gesture would bring up an install menu showing all available versions, including older ones — useful for testing regressions or going back to a known-good build.
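The core of such an update check is a version comparison. The following is a minimal sketch under stated assumptions:

- **Assumed response shape.** The service returns each build's marketing version, build number and visibility flag.
- **Hypothetical names.** `RemoteBuild`, `UpdateChecker` and those field names are illustrative, not the package's real API.
- **No networking.** The network call is omitted so the comparison logic stands alone.

On device, the running app's own values would come from the `CFBundleShortVersionString` and `CFBundleVersion` Info.plist keys.

```swift
import Foundation

/// One build as the deployment service might describe it (hypothetical shape).
struct RemoteBuild: Codable, Equatable {
    let version: String   // marketing version, e.g. "1.4.2"
    let buildNumber: Int  // increases with every upload of the project
    let isHidden: Bool    // author hid it via per-build visibility controls
}

enum UpdateChecker {
    /// Compares dotted versions numerically, so "1.10" ranks above "1.9".
    /// Returns -1, 0, or 1; missing or non-numeric components count as 0.
    static func compare(_ a: String, _ b: String) -> Int {
        let lhs = a.split(separator: ".").map { Int($0) ?? 0 }
        let rhs = b.split(separator: ".").map { Int($0) ?? 0 }
        for i in 0..<max(lhs.count, rhs.count) {
            let l = i < lhs.count ? lhs[i] : 0
            let r = i < rhs.count ? rhs[i] : 0
            if l != r { return l < r ? -1 : 1 }
        }
        return 0
    }

    /// Returns the newest visible build strictly newer than the running one, if any.
    static func newerBuild(than currentVersion: String,
                           currentBuild: Int,
                           in builds: [RemoteBuild]) -> RemoteBuild? {
        builds
            .filter { !$0.isHidden }
            .filter {
                let c = compare($0.version, currentVersion)
                return c > 0 || (c == 0 && $0.buildNumber > currentBuild)
            }
            .max { a, b in
                let c = compare(a.version, b.version)
                return c < 0 || (c == 0 && a.buildNumber < b.buildNumber)
            }
    }
}
```

Comparing components numerically matters because a plain string comparison would rank "1.9" above "1.10". The shake-gesture menu would skip the "newer than" filter and list every visible build instead.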

Other engineers started integrating the auto-update package into their prototypes. Designers, newly equipped with Claude Code, had started building their own iOS prototypes and adopted the deployment service for sharing them. The improved discoverability of projects and version history made the whole system more useful as something people actually returned to, rather than a place to dump one-off builds.

The constraint

By early 2026, design engineering had identified a need to rapidly prototype standalone app concepts — building and iterating on small iOS apps as proof-of-concept investigations rather than features within Snapchat. The team needed velocity. Lots of prototypes, fast iteration, low ceremony.

Outside Snap, the obvious approach would have been to use one of the new agentic coding platforms — OpenClaw being the most-discussed at the time — tools that let you describe an app and have it built and iterated on through agent-driven coding. Several of these had launched recently and were dramatically compressing the time from idea to working app.

Inside Snap, OpenClaw and similar platforms weren't available for our use. We had access to Claude Code, newly approved as an internal coding tool, but the broader external agentic platforms that ran code in their own environments were off-limits.

This was the gap. The mandate said "ship app prototypes fast." The toolchain available was "Claude Code as a CLI on your laptop." There was real distance between what the team needed and what was available.

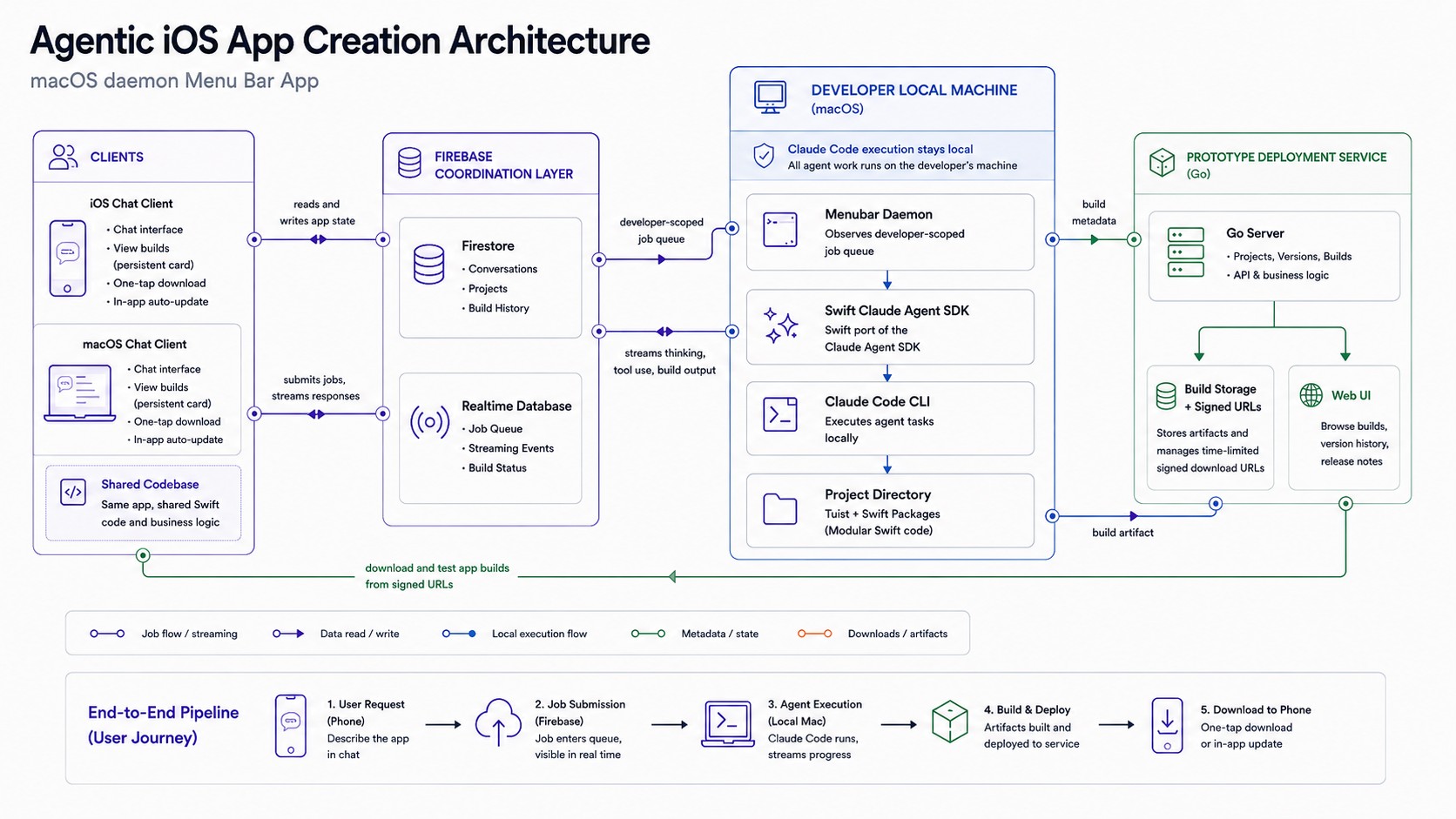

The move was to build the missing piece ourselves. Not by replicating external platforms, but by building something that operated within the available constraints: Claude Code running locally on each developer's laptop, an iOS/macOS chat client driving it, and the prototype deployment service handling builds and distribution. Everything would stay internal. Everything would respect the existing infrastructure boundaries. The agent execution would happen entirely on developer machines, not in any external service.

The premise was simple: chat with an agent from your phone, have it build and ship a real iOS app to that same phone, prototype an app idea from anywhere.

Porting the Claude Agent SDK to Swift

The system needed to be Swift end-to-end. The deployment service was Swift-friendly. The clients had to be SwiftUI. The developer-side daemon had to integrate with Apple platform services. A Node.js bridge would have worked but would have added significant operational complexity for a prototype.

The Claude Agent SDK existed only in TypeScript. So I ported it.

The approach was methodical. I used Claude to analyze the TypeScript SDK as a dependency graph — what code stood on its own, what depended on other modules, what touched the network, what was pure data manipulation. From that analysis came a multi-part porting plan with milestones. Each milestone included tests, and the build had to succeed and tests had to pass before moving to the next milestone. This let the port itself become an agentic project — I'd describe a milestone, Claude would execute it, the tests would validate it, and we'd move on.
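The milestone ordering can be illustrated with a layered topological sort (Kahn's algorithm). Modules with no dependencies form the first milestone, modules that depend only on those form the next, and so on. This is a minimal sketch: in the project, Claude performed the analysis and wrote the plan. The function name and the module names used below are hypothetical stand-ins, not the SDK's real layout.

```swift
/// Groups modules into milestones so each module is ported only after
/// everything it depends on (layered Kahn's algorithm).
/// `deps[m]` lists the modules that `m` depends on.
/// Returns nil if the graph has a cycle.
func portingMilestones(_ deps: [String: [String]]) -> [[String]]? {
    // Every module mentioned anywhere becomes a node.
    var nodes = Set(deps.keys)
    for targets in deps.values { nodes.formUnion(targets) }

    // Count not-yet-ported dependencies per module and record reverse edges.
    var remaining: [String: Int] = [:]
    var dependents: [String: [String]] = [:]
    for node in nodes {
        let ds = Set(deps[node] ?? [])
        remaining[node] = ds.count
        for d in ds { dependents[d, default: []].append(node) }
    }

    var milestones: [[String]] = []
    var ready = nodes.filter { remaining[$0] == 0 }.sorted()
    var placed = 0
    while !ready.isEmpty {
        milestones.append(ready)
        placed += ready.count
        var next: [String] = []
        for done in ready {
            for dependent in dependents[done] ?? [] {
                remaining[dependent]! -= 1
                if remaining[dependent] == 0 { next.append(dependent) }
            }
        }
        ready = next.sorted()
    }
    // Modules caught in a cycle are never placed; they must be split or ported together.
    return placed == nodes.count ? milestones : nil
}
```

Consider a graph where `query` depends on `transport` and `messages`, and both `transport` and `mcp` depend on `messages`. It yields three milestones: `[messages]`, `[mcp, transport]` and `[query]`. Each milestone would then carry its own tests, gating the next.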

The end result was a complete functional port of the SDK to Swift. Because the Swift ecosystem already had an MCP protocol implementation, real MCP integration came along for free — the ported SDK could speak to MCP servers natively without additional translation layers.

The three-part system

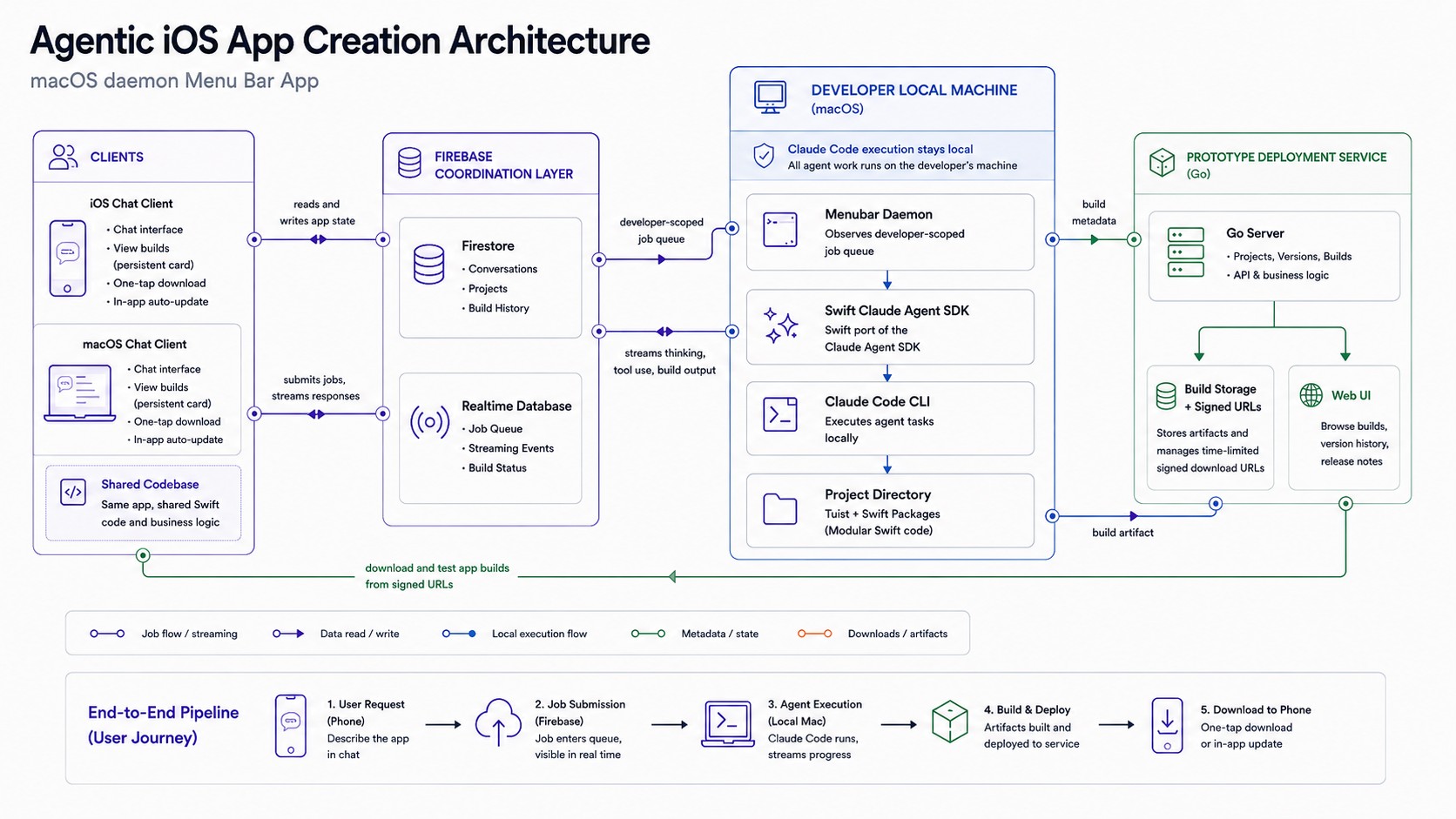

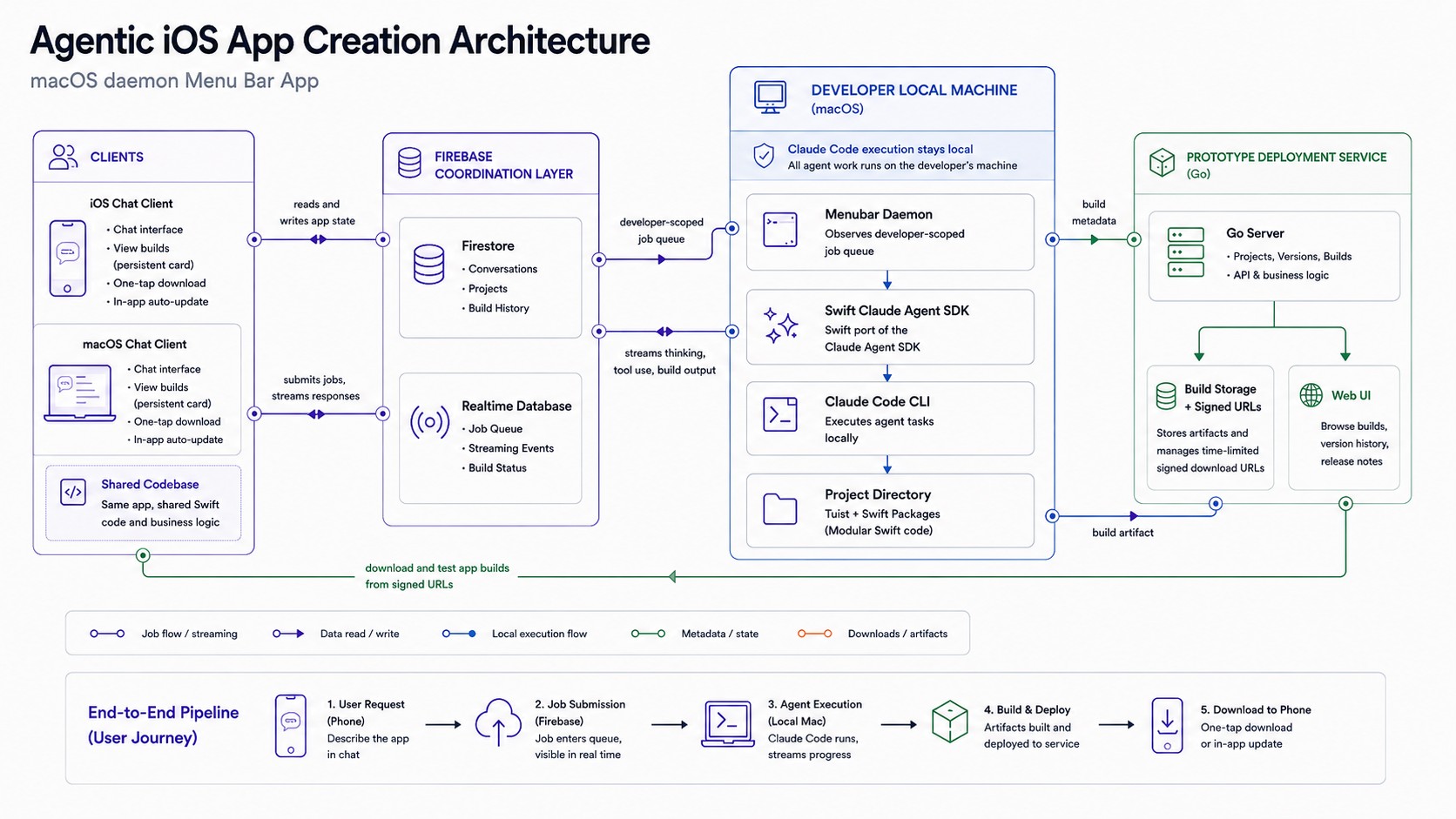

With the Swift Claude Agent SDK in hand, the system had three components.

The developer-side menubar daemon. A macOS menubar application that ran on each developer's laptop. This was the only component that had the Swift Claude Agent SDK and could control Claude Code. It observed a job queue in Firebase scoped to that specific developer — when a user submitted a request from their phone, the job arrived here. The daemon launched Claude with the appropriate project context, streamed responses back as they were generated, and managed the lifecycle of the build-and-deploy pipeline.
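The following is a minimal sketch of the daemon's queue handling: pick the oldest pending job addressed to this developer and mark it running. Its assumptions:

- **Hypothetical names.** The `Job` fields, status values and `claimNextJob` helper are illustrative.
- **In-memory stand-in.** The array replaces the Firebase listener.
- **Transactions needed.** Against a real shared database, the status change would have to happen inside a transaction so a job is never claimed twice.

```swift
import Foundation

enum JobStatus: String, Codable {
    case pending, running, succeeded, failed
}

/// A request submitted from the phone (hypothetical shape).
struct Job: Codable, Equatable {
    let id: String
    let developerID: String  // queue is scoped to one developer's machine
    let projectID: String
    let prompt: String
    let createdAt: Date
    var status: JobStatus
}

/// Picks the oldest pending job for this developer and marks it running.
/// Returns nil when there is nothing to do.
func claimNextJob(for developerID: String, in jobs: inout [Job]) -> Job? {
    let candidates = jobs.indices.filter {
        jobs[$0].developerID == developerID && jobs[$0].status == .pending
    }
    guard let index = candidates.min(by: { jobs[$0].createdAt < jobs[$1].createdAt }) else {
        return nil
    }
    jobs[index].status = .running
    return jobs[index]
}
```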

The daemon used Claude Code's streaming APIs end-to-end. Thinking events, tool-use events, file edits and build output all flowed through the Firebase Realtime Database as they happened, so the user could watch the agent work from their phone in real time. Tool approval modes mirrored Claude Code's own settings, so you could configure how much autonomy the agent had per project or per conversation.
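One way to carry these events through the Realtime Database is a flat, tagged envelope with a per-conversation sequence number. Each event would be written as one child, for example under a hypothetical `streams/{conversationID}/events` path, so the phone can order and deduplicate what it renders. The event cases mirror the categories above. The type names, JSON fields and sequencing scheme are assumptions for illustration, not the Claude Agent SDK's own message types.

```swift
import Foundation

/// Events the daemon forwards while the agent works (hypothetical shapes).
enum AgentEvent: Equatable {
    case thinking(text: String)
    case toolUse(name: String, input: String)
    case fileEdit(path: String)
    case buildOutput(line: String)
}

/// Flat envelope written as one JSON child per event.
struct EventEnvelope: Codable, Equatable {
    let seq: Int                    // per-conversation ordering key
    let type: String                // discriminator: "thinking", "tool_use", ...
    let payload: [String: String]

    init(seq: Int, event: AgentEvent) {
        self.seq = seq
        switch event {
        case .thinking(let text):
            type = "thinking"; payload = ["text": text]
        case .toolUse(let name, let input):
            type = "tool_use"; payload = ["name": name, "input": input]
        case .fileEdit(let path):
            type = "file_edit"; payload = ["path": path]
        case .buildOutput(let line):
            type = "build_output"; payload = ["line": line]
        }
    }

    /// Rebuilds the event on the client; unknown or malformed types yield nil.
    var event: AgentEvent? {
        switch type {
        case "thinking":
            return payload["text"].map { AgentEvent.thinking(text: $0) }
        case "tool_use":
            guard let n = payload["name"], let i = payload["input"] else { return nil }
            return .toolUse(name: n, input: i)
        case "file_edit":
            return payload["path"].map { AgentEvent.fileEdit(path: $0) }
        case "build_output":
            return payload["line"].map { AgentEvent.buildOutput(line: $0) }
        default:
            return nil
        }
    }
}
```

A flat string payload keeps each child small and easy to inspect. Because unknown `type` values decode to `nil`, an older client can skip event kinds it does not understand.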

The iOS/macOS chat client. A SwiftUI app, primarily designed for phone usage but fully functional on Mac. The interface looked like a modern AI chat — message history, streaming responses, expandable thinking blocks, expandable tool-use visualizations, Markdown rendering. Users could create new app projects, switch between active projects, browse the full prototype library, and request iterations on existing builds.

The persistent UI affordance that tied everything together: at the top of every chat, the app being worked on was visible — its icon, its name, and an expandable disclosure that surfaced the current build status, version history, and a one-tap download for the latest build. After the first successful build of a new app, this card became how you knew the system was actually doing the thing. The agent finished. The build deployed. The icon appeared at the top of your chat. You tapped download. The app installed on your phone. You opened it.

The persistence mattered because you could work on multiple apps at once. Each conversation belonged to its own app project; the card at the top kept you oriented. The app icons that the deployment service had been extracting and caching for months were finally visible in the UI they'd been built for.

The deployment service extension. Beyond the existing work, two pieces specifically supported the agentic system:

The Swift Package for automatic updates became how iterations propagated. When the agent shipped a new build, the user already had the previous version installed; the auto-update package detected the new version and offered to install it. The "rapid iteration" loop — request a change, watch the agent work, install the new build, test it, request another change — depended entirely on this automatic-update path.

The project structure was templated. When the agent created a new app, it didn't generate an Xcode project from scratch — it started from a template directory with the appropriate Tuist configuration, initial Swift Package structure, deployment service integration, and Git initialization already in place. The agent's job was filling in the app's actual functionality, not figuring out Apple's tooling.
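Instantiating such a template can be as simple as substituting a few placeholder tokens in each copied file. The following is a minimal sketch under stated assumptions:

- **Placeholder convention.** Tokens use a hypothetical `{{TOKEN}}` form.
- **Hypothetical names.** The token names and the `renderTemplate` helper are illustrative.
- **Out of scope.** Copying the directory and running `git init` are left to the daemon.

```swift
import Foundation

/// Replaces `{{KEY}}` placeholders with values. Placeholders with no value
/// are left in place and reported, so a half-filled template can be caught
/// before the agent starts work.
func renderTemplate(_ text: String, values: [String: String]) -> (output: String, missing: [String]) {
    var output = ""
    var missing: [String] = []
    var rest = Substring(text)
    while let open = rest.range(of: "{{") {
        output.append(contentsOf: rest[..<open.lowerBound])
        let afterOpen = rest[open.upperBound...]
        guard let close = afterOpen.range(of: "}}") else {
            // Unterminated placeholder: keep the remainder verbatim.
            output.append(contentsOf: rest[open.lowerBound...])
            rest = ""
            break
        }
        let key = String(afterOpen[..<close.lowerBound])
        if let value = values[key] {
            output += value
        } else {
            missing.append(key)
            output += "{{\(key)}}"
        }
        rest = afterOpen[close.upperBound...]
    }
    output.append(contentsOf: rest)
    return (output, missing)
}
```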

What it felt like to use

Using the MVP was, in some sense, the case study in compressed form.

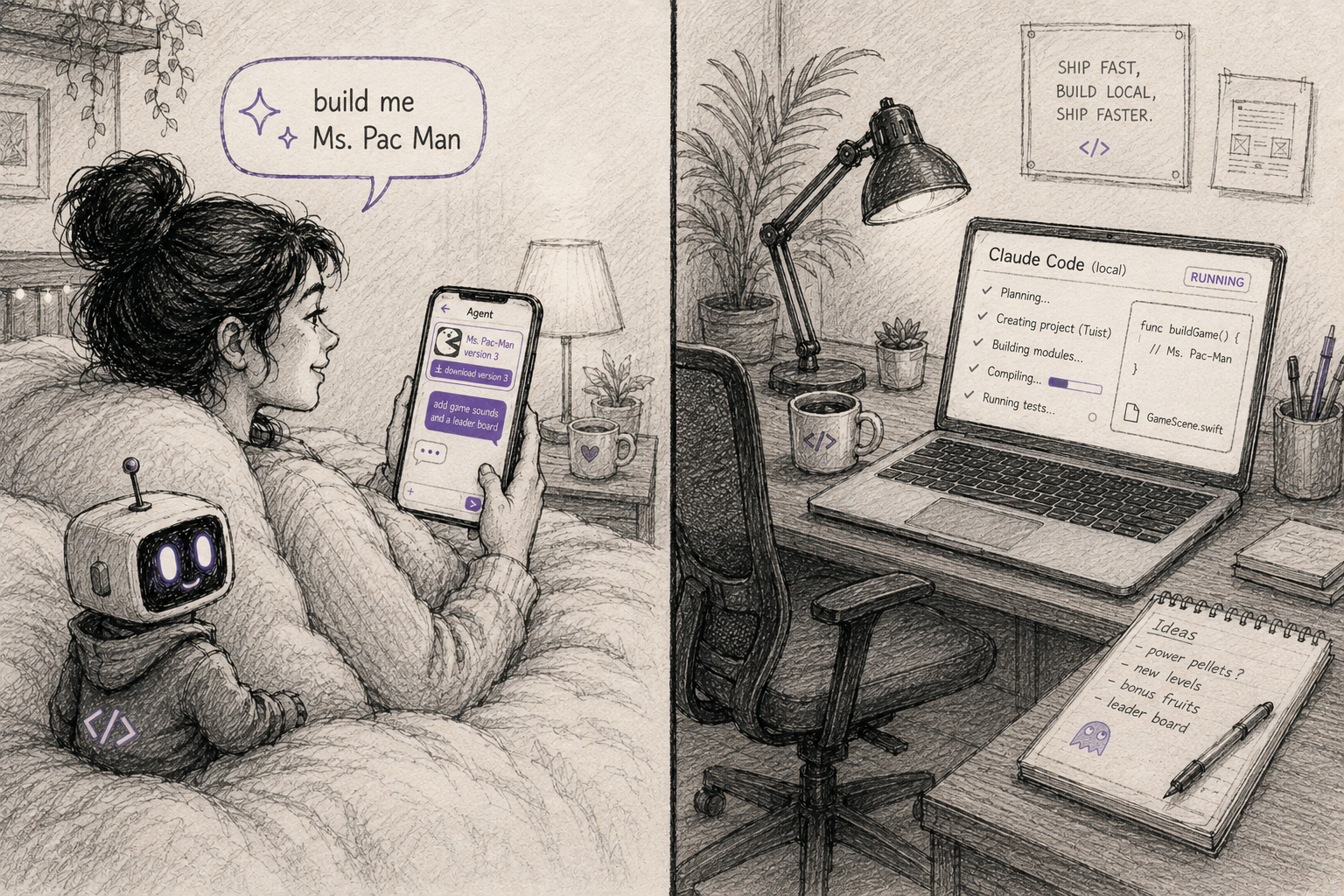

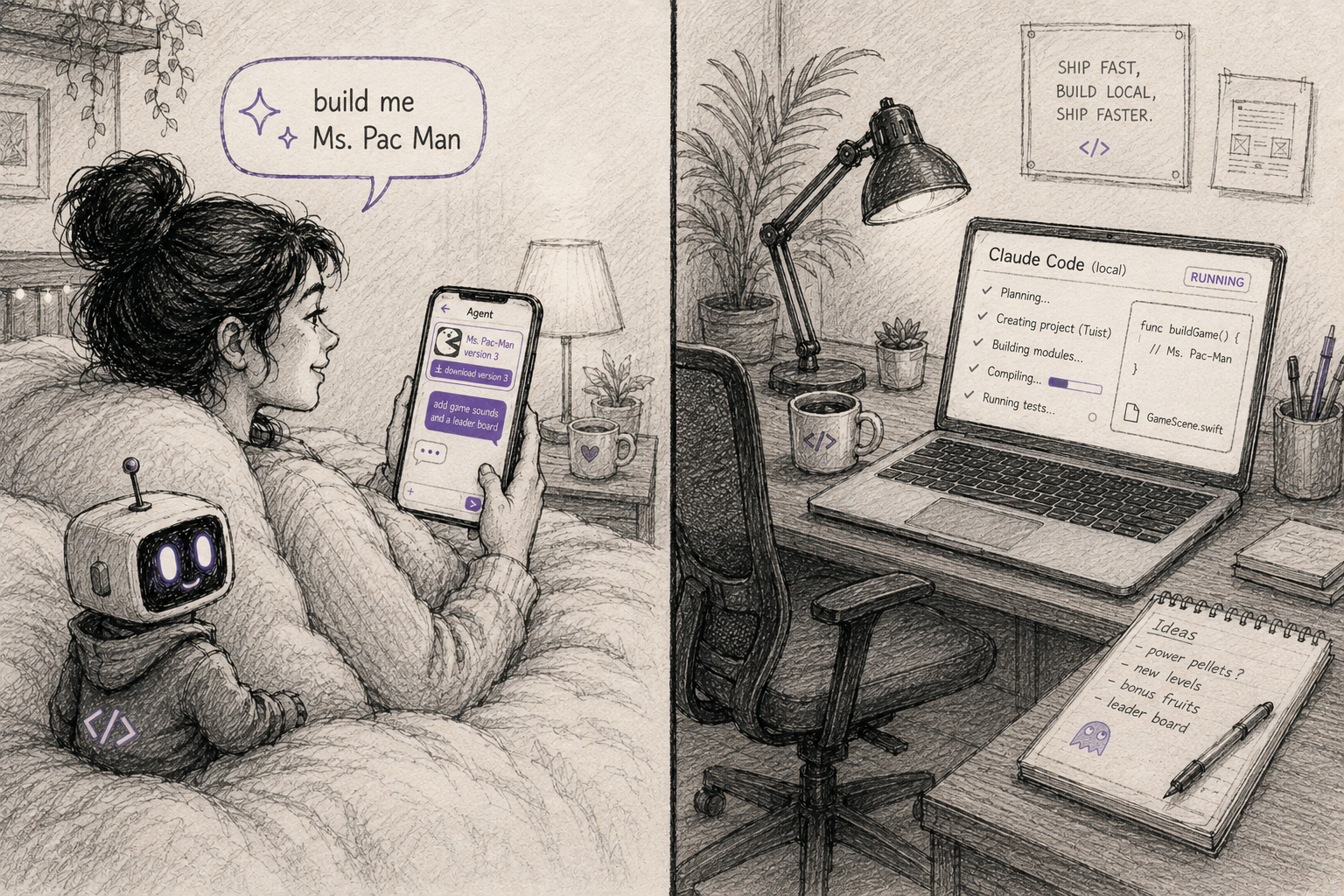

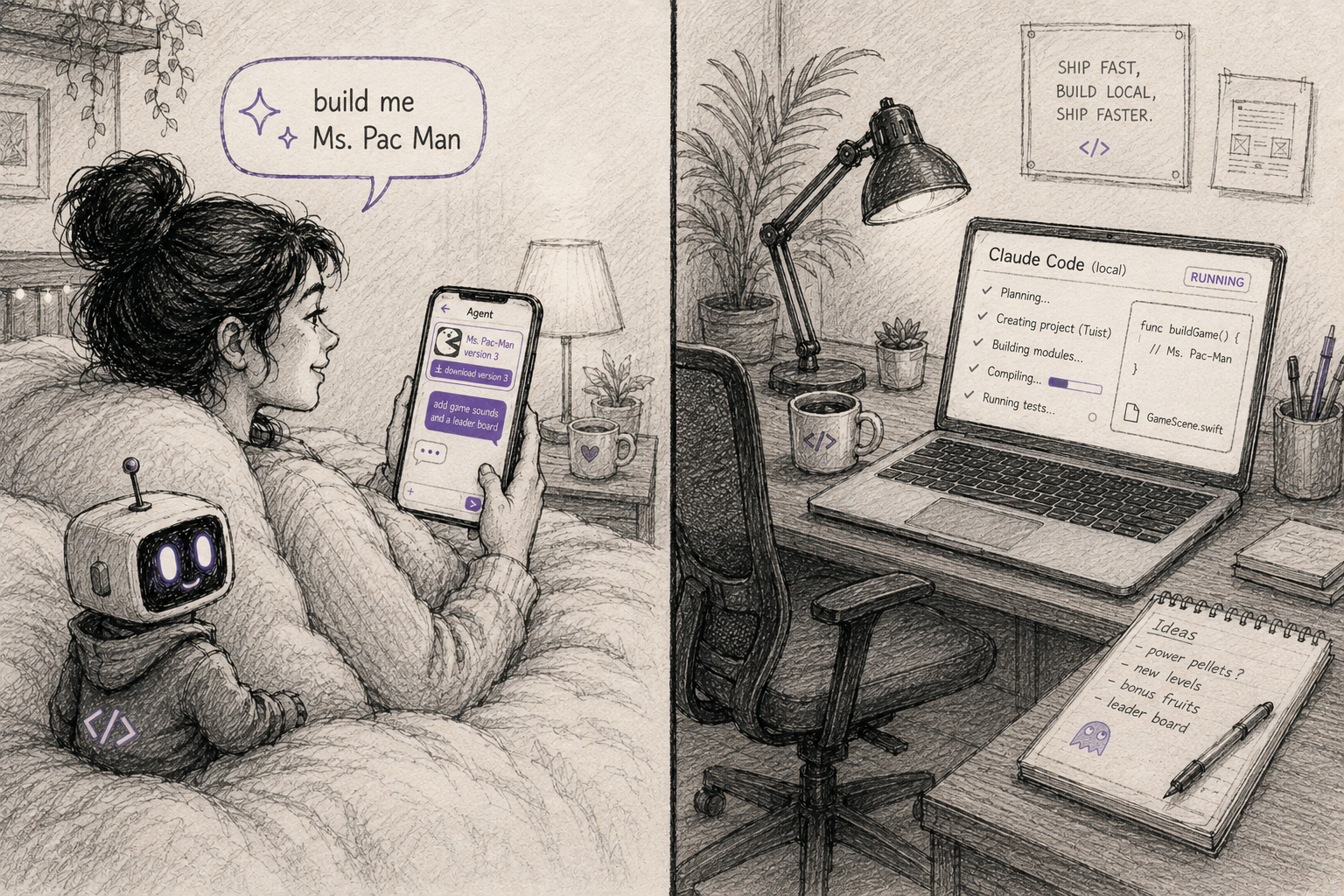

You'd open the chat app on your phone. Start a new project. Type "build me Ms Pac-Man." Watch the agent think about it: the streaming response describing its understanding of the game, the architecture it would use, the design decisions. Watch tool use surface as the agent reads template files, writes new Swift files, runs builds and debugs errors. The thinking blocks expand or collapse as you like. The Markdown renders cleanly. You can put the phone down; the Mac daemon keeps working. You come back, the build has succeeded, the app's icon and name have appeared at the top of your chat, and you tap download. Ms Pac-Man installs on your phone, complete with sounds, controls and a working game loop.

Then you tell the agent it should have a high-score screen. Watch it work. Tap download again. The new version is on your phone.

The first time this worked end-to-end, I knew the MVP was real: you could create an app for your phone, on your phone, without leaving your bed.

The architectural decisions that made it work

A few choices were load-bearing in ways that aren't obvious until you try to build this kind of system.

Tuist instead of Xcode project files. Most attempts to have AI agents build iOS apps fail at the same point: the agent cannot reliably manage .xcodeproj files. The project.pbxproj file inside each one is a proprietary, machine-oriented format full of generated object identifiers, and it is brittle to manual edits. An agent that tries to add a dependency or restructure a target will frequently produce a project that won't open in Xcode. The solution most people reach for is "give the agent better tools for editing Xcode projects." The better solution is to not have an Xcode project the agent needs to manage at all.

Tuist generates Xcode projects from Swift configuration files. The agent works with Swift code — Package.swift, Project.swift, the actual application source. When the build needs to run, Tuist generates the Xcode project on demand. The agent never touches a .xcodeproj.

I'd already been building iOS apps with modular architecture (separate Swift packages for major subsystems, a thin app target that composes them) and Tuist was a clean fit. The agent received instructions on how to add features, when to extract new modules, what patterns to follow. The output was apps I'd actually want to maintain.
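For illustration, a thin app target composing one local feature package might be declared in Tuist's `Project.swift` like this. It is a config sketch assuming Tuist 4's manifest API. The project name, package path and bundle identifier are hypothetical, and parameter details can vary between Tuist versions.

```swift
import ProjectDescription

// Thin app target; features live in separate local Swift packages.
let project = Project(
    name: "PrototypeApp",
    packages: [
        .local(path: "Packages/GameCore"),
    ],
    targets: [
        .target(
            name: "PrototypeApp",
            destinations: .iOS,
            product: .app,
            bundleId: "com.example.prototypeapp",
            infoPlist: .extendingDefault(
                with: [
                    "UILaunchScreen": [
                        "UIColorName": "",
                        "UIImageName": "",
                    ],
                ]
            ),
            sources: ["App/Sources/**"],
            resources: ["App/Resources/**"],
            dependencies: [
                .package(product: "GameCore"),
            ]
        ),
    ]
)
```

Running `tuist generate` turns this manifest into the `.xcodeproj`. Extracting a new module then means creating a package and adding a `.local` entry plus a `.package(product:)` dependency, all in plain Swift the agent can edit safely.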

Claude Code stays on the developer's machine. Every developer using the system had their own Claude Code instance running locally, controlled by their own menubar daemon. The phone client didn't drive Claude Code directly; it submitted jobs that the developer's own machine picked up and executed.

This had several benefits beyond fitting within the available toolchain. Claude Code's context windows, file system access, and tool execution all stayed within the developer's environment. The phone became a thin client for what was still fundamentally local agentic work. The system didn't require trusting an external service to run code; it required trusting the developer's own machine, which is the trust boundary that already existed.

Firebase Realtime Database for live streaming, Firestore for persistence. This is the same split the previous case study used. The Realtime Database carried the moment-to-moment activity, where high-frequency writes and live reads mattered: agent thinking, tool calls, partial responses and build status. Firestore stored the durable state, where structure and queryability mattered: projects, conversation histories and build metadata.
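The split can be captured as a routing rule: ephemeral, high-frequency records go to the Realtime Database, and durable ones go to Firestore. A minimal sketch; the record kinds mirror the examples above, but the `Store`/`Record` types and path layouts are hypothetical.

```swift
enum Store: Equatable { case realtimeDatabase, firestore }

/// Kinds of data the system writes (hypothetical layout).
enum Record {
    case streamEvent(conversationID: String, seq: Int)  // thinking, tool calls, partial text
    case buildStatus(projectID: String)                 // live build progress
    case project(projectID: String)
    case conversation(projectID: String, conversationID: String)
    case buildMetadata(projectID: String, version: String)

    /// Which store the record lives in, and the path it is written to.
    var location: (store: Store, path: String) {
        switch self {
        case .streamEvent(let c, let seq):
            return (Store.realtimeDatabase, "streams/\(c)/events/\(seq)")
        case .buildStatus(let p):
            return (Store.realtimeDatabase, "buildStatus/\(p)")
        case .project(let p):
            return (Store.firestore, "projects/\(p)")
        case .conversation(let p, let c):
            return (Store.firestore, "projects/\(p)/conversations/\(c)")
        case .buildMetadata(let p, let v):
            return (Store.firestore, "projects/\(p)/builds/\(v)")
        }
    }
}
```

The Firestore paths keep an even number of segments so each one addresses a document inside a collection.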

If the system had matured beyond MVP, the right next step would have been to make the daemon a deployable Mac service rather than a per-developer install. But for the prototype phase, per-developer daemons were both the right scope and the right security model.

The roadmap that could be

Multi-agent orchestration was the most interesting layer ahead. A project manager agent that took user requests and translated them into structured tasks for a coding agent. A reviewer agent, possibly using Codex, that could check the coding agent's work for correctness and suggest improvements. A design agent, potentially backed by tools like Google's Stitch for generating UI mockups and design systems with image generation. The pattern would have covered the same ground Anthropic, OpenAI and others were exploring: structured agent roles with clean handoffs between them, each specialized for the work it did best.

Skills and pre-built components for common patterns. Backend integration patterns — making it easy for generated apps to use Firebase, push notifications, in-app purchases, or other commonly-needed services.

What happened next

April 15th was the layoff. A day or two before, I demoed the MVP to a small group of teammates. Ironically, on April 17th Anthropic released Claude Design, a related product working in adjacent territory. I'm glad they shipped a tool I'd want to use; it happens to be the direction my project was heading next.

After Snap, I started over from scratch and ported the Claude Agent SDK to Swift again, this time without access to the original work, and this time better. Then I did the same for Codex and for Cursor: three separate ports, each built independently from the ground up. A shared Mac chat app I built lets me test all three agentic coding tools through one unified interface. The next step is finding the common patterns across them and contending honestly with the features that genuinely differ. Rather than using these for app creation, I plan to build a personal agentic media-generation system that connects to local GPUs I own (RTX 6000 Pros). Ideally, I'll be able to access it from all of my devices.

There's also a project I want to build for myself: a Japanese learning agent that does what Duolingo doesn't, one that is adult, engaging and contextual to my actual life. Prompt it to practice a conversation I might actually need to have. Generate the audio, the media, the variations. Surface furigana, kanji, romaji or English translations depending on how much help I want. Since I live in Tokyo, learning Japanese is an ongoing project, and the tools to make it interesting can now be built personally in a way they couldn't before.

Related work

- The storytelling video platform case study covers the storytelling video editor whose macOS port initiated the deployment service work that became this project's foundation. The relationship runs both directions — that project's needs shaped the infrastructure; the infrastructure made this project possible.

An Agentic iOS App Creation System

May 17, 2026

A cross-platform AI video creation tool for storytelling with persistent characters who can talk.

Role: Design Engineer (proposer, architect, sole builder)

Timeframe: Early 2026 (approximately three weeks for the MVP, plus preceding deployment service infrastructure work)

Tech: Swift, SwiftUI, Claude Code, Claude Agent SDK (ported to Swift), MCP, Firebase (Realtime Database + Firestore), Tuist, Swift Package Manager, Go (deployment service)

TL;DR

- Built a working agentic iOS app creation system at Snap as a Design Engineer — users could chat with an agent from their phone to build, iterate on, and deploy real iOS app prototypes, then download builds directly to their device.

- Ported the Claude Agent SDK from TypeScript to Swift through agent-assisted dependency-graph analysis and milestone-tested execution, enabling the entire system to be built natively in Swift across iOS, macOS, and a developer-side menubar daemon.

- Architectural choices made the system tractable where similar approaches typically fail: keeping Claude Code execution local to each developer's machine, using Tuist instead of Xcode project files so the agent could manage modular Swift architecture, and building on an existing internal prototype deployment service I'd previously extended to support automatic updates.

The infrastructure that came first

This project started a few months earlier than it looks like it did.

Design engineering at Snap had its own tools for deploying prototypes — a service that produced QR-coded download links you could share with specific people. Useful, but limited. The links were undiscoverable to anyone you didn't share them with; the system occasionally broke; the website was basic. Originally built in Go by a design engineer who had since left, it stored build metadata in Firebase, organized by UUID.

I'd been using this service for the storytelling video editor (the iOS app described in the previous case study), and when an internal team requested a macOS version, I built one. Same SwiftUI codebase, different target. But this surfaced a follow-on problem: how would those users get updates? Bug fixes, model improvements, the inevitable churn of a tool under active development — none of it would reach them without an update mechanism.

So I extended the deployment service. The work that resulted ended up being more substantial than I'd initially scoped:

- Reorganized the server to organize builds by project rather than just UUID, with creators, versions, and per-version metadata

- Added macOS and Mac Catalyst support to the builder shell scripts, including the code-signing pipeline for CI

- Auto-extracted app icons during the build process and uploaded them to Firebase as version metadata (cached to avoid duplicates)

- Built a significantly improved web UI on top of the new server endpoints — projects became browsable, version histories visible, release notes supported in Markdown

- Added per-build visibility controls so authors could hide old or broken builds without deleting them

The piece that mattered most for what came next was a Swift Package for automatic updates. Any iOS or macOS app could integrate it; on launch or foreground, it would check the deployment service for newer versions of itself. If a newer version existed, the app could surface a UI for downloading and installing it. A shake gesture would bring up an install menu showing all available versions, including older ones — useful for testing regressions or going back to a known-good build.

Other engineers started integrating the auto-update package into their prototypes. Designers, newly equipped with Claude Code, had started building their own iOS prototypes and adopted the deployment service for sharing them. The improved discoverability of projects and version history made the whole system more useful as something people actually returned to, rather than a place to dump one-off builds.

The constraint

By early 2026, design engineering had identified a need to rapidly prototype standalone app concepts — building and iterating on small iOS apps as proof-of-concept investigations rather than features within Snapchat. The team needed velocity. Lots of prototypes, fast iteration, low ceremony.

Outside Snap, the obvious approach would have been to use one of the new agentic coding platforms — OpenClaw being the most-discussed at the time — tools that let you describe an app and have it built and iterated on through agent-driven coding. Several of these had launched recently and were dramatically compressing the time from idea to working app.

Inside Snap, OpenClaw and similar platforms weren't available for our use. We had access to Claude Code, newly approved as an internal coding tool, but the broader external agentic platforms that ran code in their own environments were off-limits.

This was the gap. The mandate said "ship app prototypes fast." The toolchain available was "Claude Code as a CLI on your laptop." There was real distance between what the team needed and what was available.

The move was to build the missing piece ourselves. Not by replicating external platforms, but by building something that operated within the available constraints: Claude Code running locally on each developer's laptop, an iOS/macOS chat client driving it, and the prototype deployment service handling builds and distribution. Everything would stay internal. Everything would respect the existing infrastructure boundaries. The agent execution would happen entirely on developer machines, not in any external service.

The premise was simple: chat with an agent from your phone, have it build and ship a real iOS app to that same phone, prototype an app idea from anywhere.

Porting the Claude Agent SDK to Swift

The system needed to be Swift end-to-end. The deployment service was Swift-friendly. The clients had to be SwiftUI. The developer-side daemon had to integrate with Apple platform services. A Node.js bridge would have worked but would have added significant operational complexity for a prototype.

The Claude Agent SDK existed only in TypeScript. So I ported it.

The approach was methodical. I used Claude to analyze the TypeScript SDK as a dependency graph — what code stood on its own, what depended on other modules, what touched the network, what was pure data manipulation. From that analysis came a multi-part porting plan with milestones. Each milestone included tests, and the build had to succeed and tests had to pass before moving to the next milestone. This let the port itself become an agentic project — I'd describe a milestone, Claude would execute it, the tests would validate it, and we'd move on.

The end result was a complete functional port of the SDK to Swift. Because the Swift ecosystem already had an MCP protocol implementation, real MCP integration came along for free — the ported SDK could speak to MCP servers natively without additional translation layers.

The three-part system

With the Swift Claude Agent SDK in hand, the system had three components.

The developer-side menubar daemon. A macOS menubar application that ran on each developer's laptop. This was the only component that had the Swift Claude Agent SDK and could control Claude Code. It observed a job queue in Firebase scoped to that specific developer — when a user submitted a request from their phone, the job arrived here. The daemon launched Claude with the appropriate project context, streamed responses back as they were generated, and managed the lifecycle of the build-and-deploy pipeline.

The daemon used Claude Code's streaming APIs end-to-end. Thinking events, tool use events, file edits, build output — everything flowed through Firebase realtime database as it happened, so the user could watch the agent work from their phone in real time. Tool approval modes mirrored Claude Code's own settings; you could configure how much autonomy the agent had per-project or per-conversation.

The iOS/macOS chat client. A SwiftUI app, primarily designed for phone usage but fully functional on Mac. The interface looked like a modern AI chat — message history, streaming responses, expandable thinking blocks, expandable tool-use visualizations, Markdown rendering. Users could create new app projects, switch between active projects, browse the full prototype library, and request iterations on existing builds.

The persistent UI affordance that tied everything together: at the top of every chat, the app being worked on was visible — its icon, its name, and an expandable disclosure that surfaced the current build status, version history, and a one-tap download for the latest build. After the first successful build of a new app, this card became how you knew the system was actually doing the thing. The agent finished. The build deployed. The icon appeared at the top of your chat. You tapped download. The app installed on your phone. You opened it.

The persistence mattered because you could work on multiple apps at once. Each conversation belonged to its own app project; the card at the top kept you oriented. The app icons that the deployment service had been extracting and caching for months were finally visible in the UI they'd been built for.

The deployment service extension. Beyond the existing work, two pieces specifically supported the agentic system:

The Swift Package for automatic updates became how iterations propagated. When the agent shipped a new build, the user already had the previous version installed; the auto-update package detected the new version and offered to install it. The "rapid iteration" loop — request a change, watch the agent work, install the new build, test it, request another change — depended entirely on this automatic-update path.

The project structure was templated. When the agent created a new app, it didn't generate an Xcode project from scratch — it started from a template directory with the appropriate Tuist configuration, initial Swift Package structure, deployment service integration, and Git initialization already in place. The agent's job was filling in the app's actual functionality, not figuring out Apple's tooling.

What it felt like to use

Using the MVP was, in some sense, the case study in compressed form.

You'd open the chat app on your phone. Start a new project. Type "build me Ms Pac-Man." Watch the agent think about it — see the streaming response describing its understanding of the game, the architecture it would use, the design decisions. Watch tool use surface — the agent reading template files, writing new Swift files, running builds, debugging errors. The thinking blocks expand or collapse as you like. The Markdown renders cleanly. You can put the phone down. The Mac daemon keeps working. You come back, the build has succeeded, the app icon and name has appeared at the top of your chat, you tap download. Ms Pac-Man installs on your phone, complete with sounds, controls, and a working game loop.

Then you tell the agent it should have a high-score screen. Watch it work. Tap download again. The new version is on your phone.

The first time this worked end-to-end, I knew the MVP was real. The ability to create an app for your phone on your phone without leaving your bed.

The architectural decisions that made it work

A few choices were load-bearing in ways that aren't obvious until you try to build this kind of system.

Tuist instead of Xcode project files. Most attempts to have AI agents build iOS apps fail at the same point: the agent cannot reliably manage .xcodeproj files. They're proprietary, binary-ish, and brittle to manual edits. An agent that tries to add a dependency or restructure a target will frequently produce a project that won't open in Xcode. The solution most people reach for is "give the agent better tools for editing Xcode projects." The better solution is to not have an Xcode project the agent needs to manage at all.

Tuist generates Xcode projects from Swift configuration files. The agent works with Swift code — Package.swift, Project.swift, the actual application source. When the build needs to run, Tuist generates the Xcode project on demand. The agent never touches a .xcodeproj.

I'd already been building iOS apps with modular architecture (separate Swift packages for major subsystems, a thin app target that composes them) and Tuist was a clean fit. The agent received instructions on how to add features, when to extract new modules, what patterns to follow. The output was apps I'd actually want to maintain.

Claude Code stays on the developer's machine. Every developer using the system had their own Claude Code instance running locally, controlled by their own menubar daemon. The phone client didn't drive Claude Code directly; it submitted jobs that the developer's own machine picked up and executed.

This had several benefits beyond fitting within the available toolchain. Claude Code's context windows, file system access, and tool execution all stayed within the developer's environment. The phone became a thin client for what was still fundamentally local agentic work. The system didn't require trusting an external service to run code; it required trusting the developer's own machine, which is the trust boundary that already existed.

Firebase Realtime Database for live streaming, Firestore for persistence. Same split as the previous case study used. The realtime database carried the moment-to-moment activity — agent thinking, tool calls, partial responses, build status — where high-frequency writes and live reads mattered. Firestore stored the durable state — projects, conversation histories, build metadata — where structure and queryability mattered.

If the system had matured beyond MVP, the right next step would have been to make the daemon a deployable Mac service rather than a per-developer install. But for the prototype phase, per-developer daemons were both the right scope and the right security model.

The roadmap that could be

Multi-agent orchestration was the most interesting layer ahead. A project manager agent that took user requests and translated them into structured tasks for a coding agent. A reviewer agent — possibly using Codex — that could check the coding agent's work for correctness and suggest improvements. A design agent, potentially backed by tools like Google's Stitch for generating UI mockups and design systems with image generation. The pattern would have followed the same intellectual ground Anthropic, OpenAI, and others were exploring: structured agent roles, with clean handoffs between them, each specialized for the work it did best.

Skills and pre-built components for common patterns. Backend integration patterns — making it easy for generated apps to use Firebase, push notifications, in-app purchases, or other commonly-needed services.

What happened next

April 15th was the layoff. I demoed the MVP a day or two before to a small group of teammates. Ironically, April 17th, Anthropic released Claude Design — a related product working in adjacent intellectual territory. Glad they shipped a tool I'd want to use; happens to be the next direction the project I was working on was heading.

After Snap, I started over from scratch and ported the Claude Agent SDK to Swift again, this time better and without access to the original work. Then I did the same for Codex and for Cursor: three separate ports, each built independently from the ground up. The shared Mac chat app I built lets me test all three agentic coding tools through one unified interface. The next step is finding the common patterns across them and contending honestly with the features that genuinely differ. Rather than using these for app creation, I plan to build a personal agentic media content generation system that connects to GPUs I own (RTX 6000 Pros). Ideally, I'll be able to access the system from all of my devices.
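
Here is one possible shape for that shared interface, sketched under assumptions. The chat app programs against a common protocol. Capability flags mark the features that differ between backends, so the UI shows only the controls the selected backend supports. `AgentBackend`, `AgentCapabilities`, `StubBackend`, and the specific flags are hypothetical. The stub capability sets are illustrative and make no claim about what the Claude Agent SDK, Codex, or Cursor actually support.

```swift
import Foundation

// Hypothetical flags for features that differ between agent backends.
struct AgentCapabilities: OptionSet {
    let rawValue: Int
    static let streamingThinking = AgentCapabilities(rawValue: 1 << 0)
    static let toolApproval      = AgentCapabilities(rawValue: 1 << 1)
    static let mcpServers        = AgentCapabilities(rawValue: 1 << 2)
}

// The common surface a shared chat app could program against.
protocol AgentBackend {
    var name: String { get }
    var capabilities: AgentCapabilities { get }
    func send(_ prompt: String) -> [String]  // simplified: returns streamed chunks
}

// Stub backend; a real version would wrap one of the ported SDKs.
struct StubBackend: AgentBackend {
    let name: String
    let capabilities: AgentCapabilities
    func send(_ prompt: String) -> [String] { ["\(name): \(prompt)"] }
}

// The controls the UI offers are exactly the ones the backend supports.
func visibleControls(for backend: any AgentBackend) -> [String] {
    var controls = ["Send"]
    if backend.capabilities.contains(.streamingThinking) { controls.append("Show thinking") }
    if backend.capabilities.contains(.toolApproval) { controls.append("Approve tools") }
    if backend.capabilities.contains(.mcpServers) { controls.append("MCP servers") }
    return controls
}

// Usage: one UI, two backends with different feature sets.
let backends: [any AgentBackend] = [
    StubBackend(name: "full", capabilities: [.streamingThinking, .toolApproval, .mcpServers]),
    StubBackend(name: "minimal", capabilities: []),
]
let controls = backends.map { visibleControls(for: $0) }
for (backend, shown) in zip(backends, controls) {
    print(backend.name, shown)
}
```

Keeping the shared protocol small and pushing differences into explicit flags is one way to find the common patterns without pretending the backends are identical.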

There's also a project I want to build for myself: a Japanese learning agent that does what Duolingo doesn't, one that is adult, engaging, and contextual to my actual life. Prompt it to practice a conversation I might actually need to have. Generate the audio, the media, the variations. Surface furigana, kanji, romaji, or English translations, depending on how much help I want. I live in Tokyo, so learning Japanese is an ongoing project, and the tools to make it interesting can now be built personally in a way they couldn't before.

Related work

- The storytelling video platform case study covers the video editor whose macOS port initiated the deployment service work that became this project's foundation. The relationship runs in both directions: that project's needs shaped the infrastructure, and the infrastructure made this project possible.
